How to Extend LLM Memory Beyond the Context Window Limit

Extending LLM memory beyond the context window requires a layered approach that combines KV cache optimization, external memory systems, and hybrid retrieval. Techniques such as DuoAttention reduce inference memory by up to 2.55×, while memory systems scoring above 90% on LongMemEval add persistent storage, temporal reasoning, and automatic knowledge updates across sessions.

Key Facts

• Context windows are stateless input buffers that reset with every API call, not true memory systems

• Commercial chat assistants and long-context LLMs show a 30% accuracy drop on LongMemEval, whose chat histories average 115k+ tokens

• KV cache compression techniques like RocketKV achieve up to 400× compression ratios with minimal accuracy loss

• External memory architectures maintain state across sessions through persistent storage, selective retention, and temporal chaining

• Leading memory systems score 90-95% on LongMemEval benchmarks compared to 60-70% for standard context-only approaches

• Production implementations combining semantic caching, hybrid retrieval, and persistent memory cut token usage by 50% while maintaining accuracy

Extending LLM memory takes more than adding tokens. Developers building production AI agents need layered retrieval, semantic caching, and external memory so that models remember well beyond a single context window. This guide breaks down why models forget, which techniques actually work, and how to ship memory-first systems that improve over time.

Why Do LLMs Forget Past Conversations?

Large language models treat each API call as a blank slate. "The context window is not a memory system - it's a stateless input buffer that resets with every API call." This means every token of conversation history, system prompt, and retrieved document competes for the same fixed budget.

The context window is the total number of tokens an LLM can process in a single request: your system prompt, retrieved documents, conversation history, and generated response all share that budget.
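
As a rough illustration of that shared budget, the sketch below drops the oldest conversation turns until everything fits. The `fit_history` helper, the 8,192-token window, the output reserve, and the whitespace-based token estimate are all assumptions made for this example; a real system would count tokens with the model's own tokenizer.

```python
def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def fit_history(system_prompt, documents, history, window=8192, reserve_for_output=1024):
    """Drop the oldest turns until prompt + documents + history fit the window."""
    fixed = estimate_tokens(system_prompt) + sum(estimate_tokens(d) for d in documents)
    budget = window - reserve_for_output - fixed
    kept = []
    # Walk from newest to oldest so the most recent turns survive.
    for turn in reversed(history):
        cost = estimate_tokens(turn)
        if cost > budget:
            break
        kept.append(turn)
        budget -= cost
    return list(reversed(kept))
```

Anything trimmed this way is simply gone for the model, which is the gap the memory techniques later in this guide try to close.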

The core limitation comes from transformer architecture. Transformers compare every token to every other token to understand relationships. Double your context and you quadruple the work. This quadratic scaling creates hard ceilings on what models can practically remember.
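
To make the quadratic claim concrete, here is a minimal sketch that counts pairwise attention scores for a single head in a single layer. It ignores optimizations such as sparse or windowed attention, and the `attention_pairs` name is ours.

```python
def attention_pairs(num_tokens: int) -> int:
    # Full self-attention scores every token against every token: n * n pairs.
    return num_tokens * num_tokens


for n in (8_192, 16_384, 32_768):
    print(f"{n:>6} tokens -> {attention_pairs(n):,} pairwise scores")
```

Each doubling of `n` multiplies the count by four.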

Modern models advertise massive limits:

| Model | Context Window |
|---|---|
| GPT-4 (8K variant) | 8,192 tokens (a 32K variant is also available; the later GPT-4 Turbo and GPT-4.1 models extend to 128K and roughly 1M tokens) |
| Claude 3.5 Sonnet | 200,000 tokens |
| Gemini 1.5 Pro | Up to 2,000,000 tokens |

Context windows have expanded by roughly two orders of magnitude since the original transformer architecture. Yet bigger windows alone do not solve the memory problem.

Does a Larger Context Window Fix Memory—Or Make It Worse?

Simply expanding context windows introduces hidden trade-offs that can degrade production performance.

Latency increases dramatically. More tokens means more work. One study found over 7x latency increase at 15,000 words of context. The computational cost scales with token count, making response times unpredictable under load.

Accuracy degrades in the middle. The lost-in-the-middle problem is well-documented: models pay more attention to information at the beginning and end of long contexts, often missing what's buried in the middle. Liu et al. demonstrated in a peer-reviewed TACL 2024 paper that LLMs show >30% accuracy degradation when relevant information is positioned in the middle of a long context.

Cost scales linearly with tokens. Every additional token costs money, and resending the full conversation history on every turn means earlier turns are billed again with each request. For complex interactions this can reach $3.20 per conversation.
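
The effect of resending history can be estimated with a small sketch. It assumes every turn adds the same number of tokens and that each request carries all turns so far; `tokens_per_turn` is a hypothetical figure, and no real pricing is modeled.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens sent when each request resends all prior turns."""
    # Request k carries k turns of history, so the total is 1 + 2 + ... + turns.
    return tokens_per_turn * turns * (turns + 1) // 2


short = cumulative_input_tokens(turns=10, tokens_per_turn=500)
long = cumulative_input_tokens(turns=20, tokens_per_turn=500)
print(short, long)
```

Per-token pricing is linear, but under these assumptions doubling the length of a conversation roughly quadruples the total tokens billed.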

Benchmarks confirm the gap. LongMemEval tests five core long-term memory abilities across 500 curated questions with chat histories averaging 115k+ tokens. Commercial chat assistants and long-context LLMs show a 30% accuracy drop on memorizing information across sustained interactions.

Key takeaway: Effective context length - the range where the model maintains strong performance - can be much shorter than the advertised maximum.

Which Techniques Actually Extend LLM Memory?

Three solution classes address the memory gap: optimizer-level KV tricks, external memory layers, and hybrid retrieval systems.

Retrieval-Augmented Generation (RAG) addresses the gap by retrieving relevant, up-to-date data at query time and adding it to the prompt. Agentic RAG goes further by embedding autonomous AI agents that dynamically manage retrieval strategies and iteratively refine their understanding of the context.

Researchers have also introduced the Continuum Memory Architecture (CMA), a class of systems that maintain and update internal state across interactions through persistent storage, selective retention, associative routing, temporal chaining, and consolidation into higher-order abstractions.

Semantic caching provides another layer. One cross-region knowledge caching architecture reports up to a 3.6× increase in throughput while keeping cache hit rates above 85% and accuracy virtually identical to non-cached baselines.

Optimizer-Level KV Tricks

KV cache compression tackles the memory bottleneck at the model level without requiring retraining.

DuoAttention identifies that only a fraction of attention heads - termed Retrieval Heads - are crucial for processing long contexts. Streaming Heads focus on recent tokens and can operate with reduced cache. This approach reduces inference memory by up to 2.55× for MHA and 1.67× for GQA models while speeding up decoding by up to 2.18× and 1.50× respectively, with minimal accuracy loss. DuoAttention enables Llama-3-8B decoding with 3.3 million context length on a single A100 GPU.
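
The reduced cache used by a streaming head can be pictured with a toy data structure. This is not DuoAttention's implementation: keeping a few initial "sink" tokens alongside the recent window is an assumption borrowed from StreamingLLM-style caches, the class and parameter names are ours, and the entries stand in for per-token key/value tensors.

```python
from collections import deque


class StreamingKVCache:
    """Keeps the first `num_sink` entries plus a sliding window of recent ones."""

    def __init__(self, num_sink: int = 4, num_recent: int = 256):
        self.num_sink = num_sink
        self.sink = []                           # earliest tokens, never evicted
        self.recent = deque(maxlen=num_recent)   # oldest entry drops automatically

    def append(self, kv_entry):
        if len(self.sink) < self.num_sink:
            self.sink.append(kv_entry)
        else:
            self.recent.append(kv_entry)

    def entries(self):
        return self.sink + list(self.recent)


cache = StreamingKVCache(num_sink=2, num_recent=3)
for token_id in range(10):
    cache.append(token_id)
print(cache.entries())
```

The cache size stays constant no matter how long the sequence grows, whereas a retrieval head would keep every entry.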

RocketKV implements a two-stage compression strategy. The first stage performs coarse-grain permanent KV cache eviction. The second stage adopts a hybrid sparse attention method for fine-grain top-k sparse attention. RocketKV provides a compression ratio of up to 400×, end-to-end speedup of up to 3.7×, and peak memory reduction of up to 32.6% on an NVIDIA A100 GPU, with negligible accuracy loss across long-context tasks.
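
For intuition about the second stage, the sketch below shows generic top-k sparse attention for one query in plain Python. It is not RocketKV's algorithm: it scores every key exactly before selecting, which a real system would avoid, and the function name and vector shapes are our own.

```python
import math


def topk_sparse_attention(query, keys, values, k):
    """Attend only to the k keys with the highest dot-product score."""
    scores = [sum(q * x for q, x in zip(query, key)) for key in keys]
    # Indices of the k best-scoring keys; all others are skipped entirely.
    top = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    scale = math.sqrt(len(query))
    peak = max(scores[i] for i in top)
    # Softmax over the selected scores only (shifted by the peak for stability).
    weights = [math.exp((scores[i] - peak) / scale) for i in top]
    total = sum(weights)
    dim = len(values[0])
    output = [
        sum(w * values[i][d] for w, i in zip(weights, top)) / total
        for d in range(dim)
    ]
    return top, output
```

With `k` much smaller than the number of cached keys, the weighted sum touches only a small slice of the value cache.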

External Memory Layers & Knowledge Graphs

External memory systems decouple storage from the model's context window.

Temporal Knowledge Graphs capture large numbers of time-stamped facts in a structured format, offering a reliable source for temporal reasoning. MemoTime decomposes complex temporal questions into a hierarchical Tree of Time, enabling operator-aware reasoning that enforces monotonic timestamps. It achieves state-of-the-art results, outperforming baselines by up to 24.0% on temporal QA benchmarks.

Graph-based memory layers like Zep's Graphiti engine dynamically synthesize both unstructured conversational data and structured business data while maintaining historical relationships. Zep achieves accuracy improvements of up to 18.5% while reducing response latency by 90% compared to baseline implementations.
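
One way to picture "maintaining historical relationships" is a fact record with a validity interval, queried as of a given date. The sketch below is a toy illustration, not Zep's or MemoTime's data model; the `TemporalFact` fields, the `facts_as_of` helper, and the sample facts are all invented for the example.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class TemporalFact:
    subject: str
    relation: str
    obj: str
    valid_from: date
    valid_to: Optional[date] = None  # None means the fact is still current


def facts_as_of(facts, subject, relation, when):
    """Return objects that were valid for (subject, relation) on the given date."""
    return [
        f.obj
        for f in facts
        if f.subject == subject
        and f.relation == relation
        and f.valid_from <= when
        and (f.valid_to is None or when < f.valid_to)
    ]


# Made-up example: the user changed employers in June 2023.
facts = [
    TemporalFact("user", "works_at", "Acme", date(2021, 1, 1), date(2023, 6, 1)),
    TemporalFact("user", "works_at", "Globex", date(2023, 6, 1)),
]
print(facts_as_of(facts, "user", "works_at", date(2022, 1, 1)))
print(facts_as_of(facts, "user", "works_at", date(2024, 1, 1)))
```

Because the old fact is closed rather than deleted, the store can answer both "where does the user work now?" and "where did they work in 2022?".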

Continuum Memory Architecture goes beyond stateless RAG. RAG treats memory as a stateless lookup table in which information persists indefinitely and retrieval is read-only. CMA instead updates its stored state as interactions accumulate: it retains information selectively, links memories associatively and in temporal order, and consolidates them into higher-order abstractions.

How Do You Measure Long-Term Memory Quality?

Benchmarks provide objective yardsticks for memory system performance.

LongMemEval evaluates five core long-term memory abilities: information extraction, multi-session reasoning, temporal reasoning, knowledge updates, and abstention. The benchmark includes 500 meticulously curated questions embedded within freely scalable user-assistant chat histories.

The categories matter because they represent production-critical failure modes:

• Information extraction: Can the system recall specific facts?

• Multi-session reasoning: Can it connect facts across separate conversations?

• Temporal reasoning: Does it handle "before" and "after" relationships correctly?

• Knowledge updates: When facts change, does it return current information?

• Abstention: Does it refuse to answer when information is unavailable?

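Leading systems show significant variation. OMEGA reports 95.4% overall accuracy alongside 466/500 correct answers; since 466/500 is 93.2% as a raw fraction, the 95.4% figure is presumably averaged across categories rather than across questions. Multi-session reasoning remains the hardest category at 83%, because connecting facts across separate conversations requires deep retrieval.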
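
When comparing reported scores, it helps to check whether a number is raw accuracy over all questions or an average of per-category accuracies, since the two can differ. The sketch below computes both on made-up toy results (not LongMemEval data); the function names and the `(category, is_correct)` input format are assumptions for illustration.

```python
from collections import defaultdict


def raw_accuracy(results):
    """results: list of (category, is_correct) pairs."""
    return sum(ok for _, ok in results) / len(results)


def category_averaged_accuracy(results):
    per_category = defaultdict(list)
    for category, ok in results:
        per_category[category].append(ok)
    # Average the per-category accuracies so small categories count equally.
    scores = [sum(v) / len(v) for v in per_category.values()]
    return sum(scores) / len(scores)


# Toy data: 8 easy questions all correct, 2 hard questions with 1 correct.
toy_results = [("extraction", True)] * 8 + [("multi-session", True), ("multi-session", False)]
print(raw_accuracy(toy_results), category_averaged_accuracy(toy_results))
```

Reporting per-category scores alongside either aggregate keeps a weak category from hiding behind a strong overall number.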

Cortex achieved 90.23% accuracy on LongMemEval-s, among the higher reported scores for memory-enhanced systems, with particularly strong performance in temporal reasoning, knowledge updates, and user preference understanding.

Key takeaway: Context windows test recall; LongMemEval tests memory. Systems that score well on simple context benchmarks often fail when information spans sessions or requires temporal awareness.

Choosing a Memory Layer: Cortex vs. OMEGA vs. Zep

Selecting a memory layer depends on your production requirements: benchmark performance, deployment model, and integration complexity.

| Capability | Cortex | OMEGA | Zep |
|---|---|---|---|
| LongMemEval Score | 90.23% | 95.4% | 71.2% |
| Architecture | Self-improving retrieval + memory | Hybrid semantic + BM25 | Temporal knowledge graph |
| Deployment | Cloud | Local (SQLite) | Cloud/Self-hosted |
| Latency (P95) | <200ms | ~1,500 tokens injected | <200ms |

A real-world case study illustrates the stakes. One team saw service usage increase 30x in two weeks. Context retrieval latency spiked from a P95 of 200ms to over 2 seconds. After optimization, their graph returned results in 150ms (P95). They cut LLM token usage in half while maintaining accuracy above 80% on the LongMemEval benchmark.

Zep provides complete context engineering combining agent memory, Graph RAG, and automated context assembly. Zep demonstrates superior performance with 17% higher accuracy and 60% faster retrieval compared to alternatives on the LoCoMo benchmark.

OMEGA runs entirely local with SQLite - no external services or GPU required. It uses bge-small-en-v1.5 via ONNX Runtime for CPU-only embedding. Memories evolve, get consolidated, and decay over time to prevent unbounded growth.

Cortex provides a self-improving retrieval engine that automatically adapts to your data, your users, and your application's behavior. The platform supports Mixture-of-Experts Routing, memory injection, and metadata-driven retrieval for precision filtering.

Implementation Checklist: From PoC to Production

Moving from prototype to production requires systematic integration of memory components.

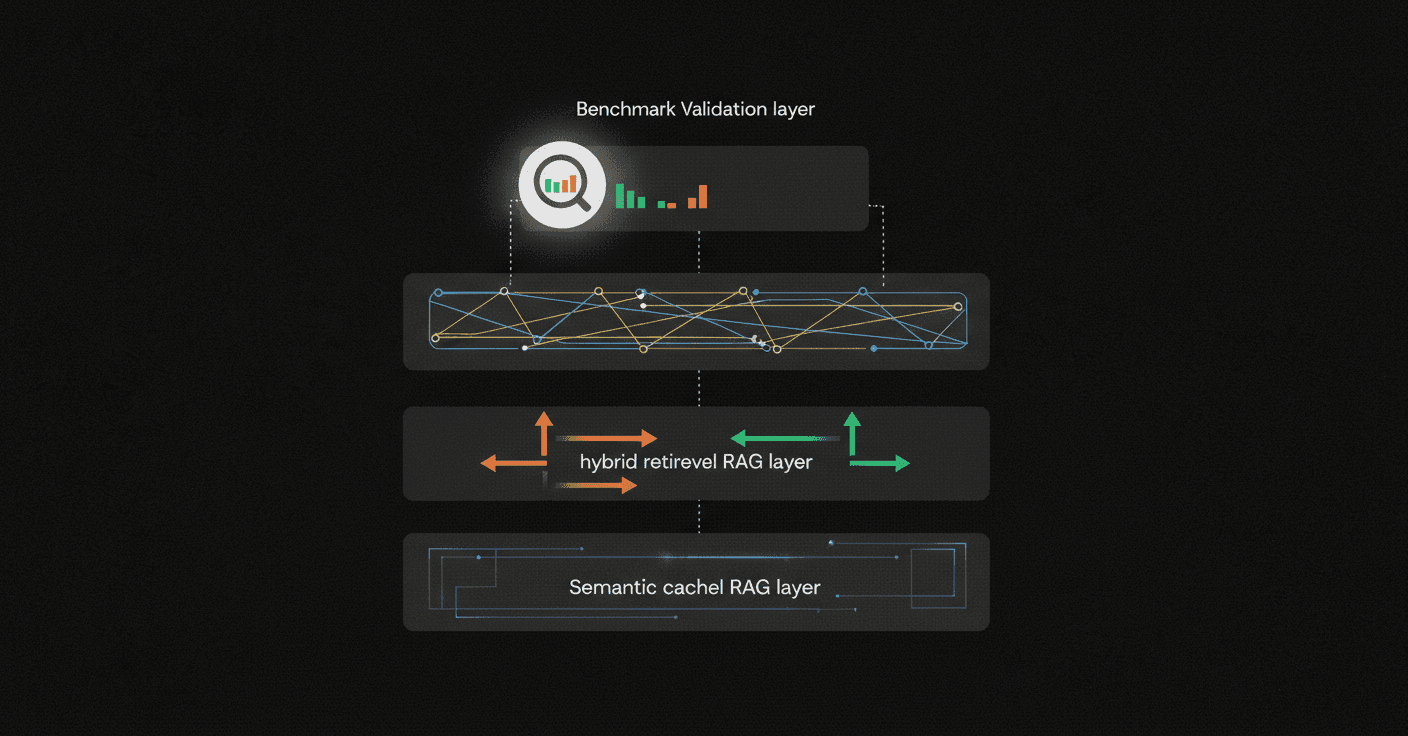

Step 1: Add semantic caching first.

Semantic caching can significantly reduce API calls and improve response latency. Unlike traditional caching which requires identical queries, semantic caching matches semantically equivalent queries regardless of phrasing.

Production implementations tune similarity thresholds for precision and recall balance, implement adaptive TTLs and smart eviction, monitor hit/miss patterns continuously, and pre-warm high-value entries.
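
A minimal sketch of the lookup logic, assuming a similarity threshold and a fixed TTL. The `embed` function here is a toy bag-of-words stand-in, so it only matches queries that share words; a production cache would call a real embedding model and a vector index. All class, function, and parameter names are ours.

```python
import math
import time
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for a real embedding model: bag-of-words counts.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8, ttl_seconds: float = 3600.0):
        self.threshold = threshold
        self.ttl_seconds = ttl_seconds
        self.entries = []  # (embedding, response, stored_at)

    def get(self, query: str, now: float = None):
        now = time.time() if now is None else now
        # Drop expired entries before searching.
        self.entries = [e for e in self.entries if now - e[2] < self.ttl_seconds]
        q = embed(query)
        best = max(self.entries, key=lambda e: cosine(q, e[0]), default=None)
        if best is not None and cosine(q, best[0]) >= self.threshold:
            return best[1]
        return None  # cache miss: call the LLM, then put() the result

    def put(self, query: str, response: str, now: float = None):
        now = time.time() if now is None else now
        self.entries.append((embed(query), response, now))
```

Raising `threshold` trades hit rate for precision: fewer queries are served from cache, but fewer users receive an answer to a subtly different question.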

Step 2: Integrate RAG with hybrid retrieval.

Hybrid retrieval combining keywords for precision and vectors for recall outperforms either approach alone. RAG-powered systems help reduce customer support response times while improving accuracy and scalability.
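
One common way to merge keyword and vector results is reciprocal rank fusion (RRF). The guide does not prescribe a fusion method, so treat this as one reasonable option; the document ids are invented, and `k = 60` is the constant conventionally used with RRF.

```python
def reciprocal_rank_fusion(rankings, k: int = 60):
    """Fuse several ranked lists of document ids into one ranking."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Documents ranked highly by any retriever get a larger contribution.
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


keyword_hits = ["doc_b", "doc_a", "doc_d"]   # e.g. from BM25
vector_hits = ["doc_a", "doc_c", "doc_b"]    # e.g. from embedding search
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

RRF uses only rank positions, so it needs no score normalization between the keyword and vector retrievers.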

Redis LLMCache provides a mechanism to store and retrieve context data efficiently, allowing LLMs to access information beyond their immediate context window. The module supports various data types and integrates with existing LLM workflows.

Step 3: Add persistent memory for multi-session continuity.

Memory transforms LLMs from stateless processors into stateful assistants capable of learning and adapting over time. Connect your memory layer to:

• User preferences and history

• Session-level facts and decisions

• Cross-session knowledge updates

• Temporal relationships between events
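
A minimal sketch of cross-session persistence using Python's built-in `sqlite3` module. The `MemoryStore` class, its table schema, and the key/value fact format are assumptions for illustration rather than any vendor's API.

```python
import sqlite3


class MemoryStore:
    def __init__(self, path: str = ":memory:"):
        # Pass a file path instead of ":memory:" to persist across processes.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS memories ("
            "user_id TEXT, key TEXT, value TEXT, updated_at TEXT, "
            "PRIMARY KEY (user_id, key))"
        )

    def remember(self, user_id: str, key: str, value: str, timestamp: str):
        # A newer value for the same key overwrites the old one (knowledge update).
        self.db.execute(
            "INSERT OR REPLACE INTO memories VALUES (?, ?, ?, ?)",
            (user_id, key, value, timestamp),
        )
        self.db.commit()

    def recall(self, user_id: str):
        rows = self.db.execute(
            "SELECT key, value FROM memories WHERE user_id = ? ORDER BY key",
            (user_id,),
        )
        return dict(rows.fetchall())


store = MemoryStore()
store.remember("u1", "city", "Berlin", "2024-01-01")
store.remember("u1", "diet", "vegetarian", "2024-02-01")
store.remember("u1", "city", "Lisbon", "2024-06-01")  # user moved
print(store.recall("u1"))
```

Overwriting keeps recall simple but discards history; storing validity intervals instead would preserve the temporal relationships listed above.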

Step 4: Validate against benchmarks.

Run your implementation against LongMemEval or LoCoMo before production deployment. Focus on the hardest categories: temporal reasoning and multi-session consistency.

Key Takeaways: Designing Memory-First LLM Systems

Extending LLM memory requires architectural thinking beyond token limits:

Context windows are not memory. They reset with every API call. Treat them as a budget, not a storage system.

Bigger windows add latency and cost without solving forgetting. The lost-in-the-middle problem means accuracy degrades even when information fits.

Layer your optimizations. Combine KV cache compression (DuoAttention cuts inference memory by up to 2.55×), external memory layers (temporal knowledge graphs, CMA), and hybrid retrieval or semantic caching.

Measure what matters. LongMemEval tests real memory capabilities across sessions. Systems showing 30% accuracy drops on sustained interactions need architectural fixes, not bigger windows.

Memory enables personalization. Cortex memories update automatically through conversation, queries, and usage, giving applications the ability to learn, remember, and adapt over time.

Teams shipping production-grade AI agents need memory that persists, reasons temporally, and improves with use. The fragmented stacks of vector databases, custom chunking logic, and prompt tricks give way to integrated memory layers that handle ingestion, search, personalization, and answer generation out of the box. The result is AI that becomes more accurate, more personalized, and more useful over time - without infrastructure babysitting.

Ready to build agents that truly remember? Cortex provides the production-ready memory layer described above - try it free to see the 90.23% LongMemEval advantage in your own stack.

Frequently Asked Questions

Why do LLMs forget past conversations?

LLMs treat each API call as a blank slate, with the context window acting as a stateless input buffer that resets with every call. This means all conversation history, system prompts, and retrieved documents compete for the same fixed token budget, leading to memory limitations.

Does a larger context window solve memory issues?

While larger context windows allow more tokens, they introduce trade-offs like increased latency, accuracy degradation in the middle of contexts, and higher costs. Effective context length is often shorter than the maximum advertised, as models struggle with information buried in the middle of long contexts.

What techniques can extend LLM memory?

Techniques like Retrieval Augmented Generation (RAG), Continuum Memory Architecture (CMA), and semantic caching can extend LLM memory. These methods enhance memory by integrating real-time data retrieval, maintaining internal state across interactions, and improving throughput with high cache hit rates.

How does Cortex improve LLM memory?

Cortex provides a self-improving retrieval engine that adapts to data, users, and application behavior. It supports memory injection and metadata-driven retrieval, enabling AI systems to remember prior interactions, adapt responses, and maintain continuity across sessions.

What is LongMemEval and why is it important?

LongMemEval is a benchmark that evaluates long-term memory abilities like information extraction, multi-session reasoning, and temporal reasoning. It tests memory capabilities across sessions, highlighting systems that can handle complex, sustained interactions effectively.