How Do You Extend Memory in Consumer AI Apps?

Extending memory in consumer AI apps requires implementing a dedicated, persistent memory layer that stores, retrieves, and updates user information independently of context windows. Leading architectures like Mem0 achieve 26% higher accuracy than OpenAI's memory while reducing computational costs by 90% through structured extraction, consolidation, and selective retrieval mechanisms that maintain conversational coherence across sessions.

At a Glance

• Context windows have hard limits (8K-2M tokens) causing models to forget earlier interactions, while commercial assistants show 30% accuracy drops in sustained conversations

• Successful memory architectures like Mem0 and EverMemOS implement dual-phase pipelines that extract, consolidate, and retrieve information, achieving 91% lower latency than full-context methods

• Practical implementation starts with 400-800 token chunks with 20% overlap for RAG systems, then adds working memory buffers and episodic logs for longer tasks

• Graph-based memory variants outperform base configurations by 2% by storing memories as directed labeled graphs capturing complex relationships

• Security requires zero-trust access controls, BYOK encryption, and audit-ready logs for compliance with memory systems storing sensitive user data

• Benchmarks like LongMemEval test memory across 115,000-1.5M tokens, revealing top systems achieve 90%+ accuracy while reducing token usage by over 90%

Extending memory in AI apps means moving beyond a single prompt window to a dedicated, durable memory layer that scales with user history and product growth. When users interact with your AI assistant dozens or hundreds of times, the model needs to remember what matters without stuffing everything into an ever-growing context window. This post walks through why memory matters, what limits you will hit, which architectures actually work, and how to implement memory that drives real personalization.

Why Does Memory Matter for Consumer-Grade AI?

Consumer AI apps live or die by how personal they feel. A shopping assistant that forgets your size preferences, a travel planner that asks for your home airport every session, or a learning app that restarts from scratch each day will frustrate users and erode retention.

"Large Language Models (LLMs) have demonstrated remarkable prowess in generating contextually coherent responses, yet their fixed context windows pose fundamental challenges for maintaining consistency over prolonged multi-session dialogues."

The core problem is straightforward: models have hard token limits. Gemini 1.5 Pro supports 2M tokens, Claude 3.5 Sonnet allows 200K, and GPT-4 ranges from 8K to 128K depending on the variant. When context exceeds the limit, models either truncate older messages or slide the window forward, literally forgetting earlier information.

Most discussions of production agents focus heavily on context window size, but that framing is incomplete.

The real challenge is designing systems whose correctness and performance do not depend on fitting all relevant data into the model at once. Memory is not an afterthought; it is the architectural primitive that lets your AI remember users across sessions, adapt to their preferences, and deliver the personalized experiences that keep them coming back.

Key takeaway: Without a dedicated memory layer, consumer AI apps become stateless utilities that feel generic no matter how sophisticated the underlying model.

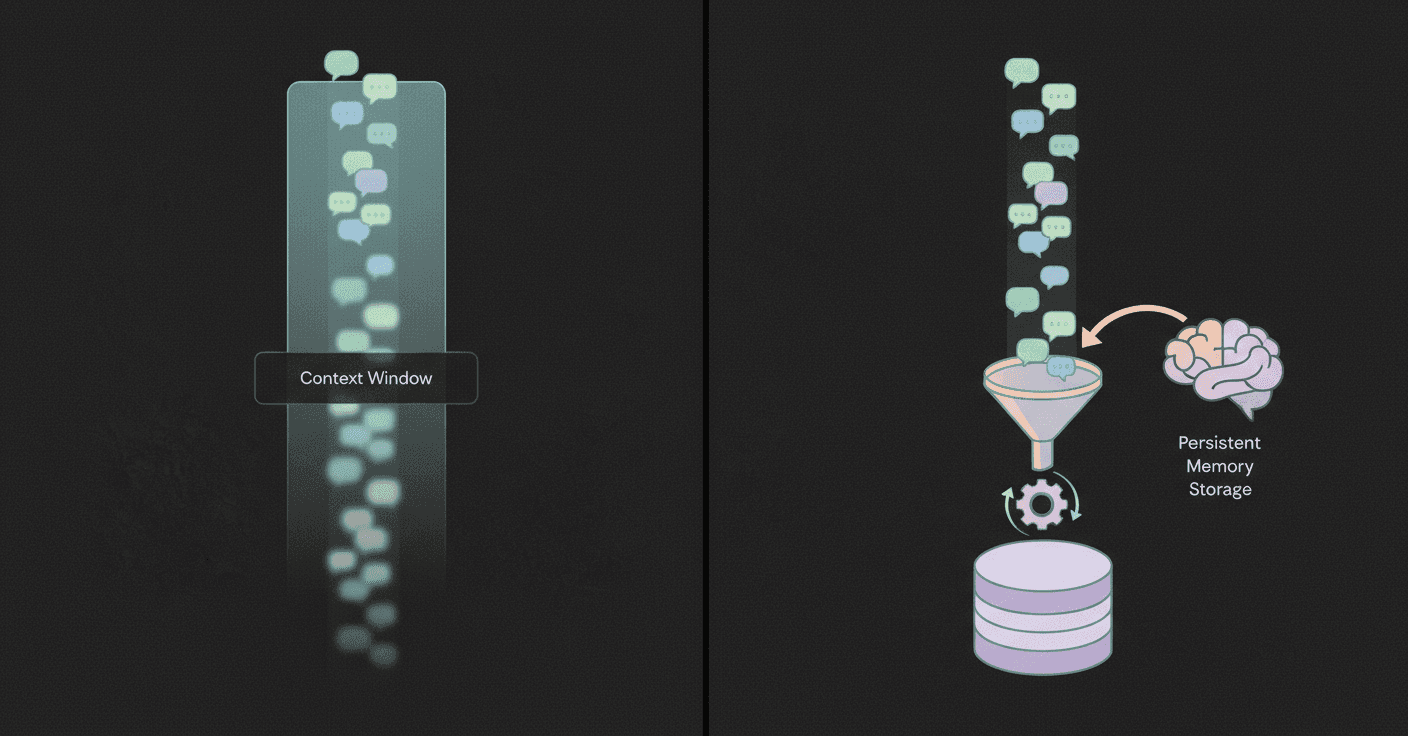

What Are the Limits of Context Windows and Stateless Designs?

Even as context windows expand, they introduce new problems.

First, retrieval-augmented generation (RAG) has become the default strategy for providing LLM agents with contextual knowledge. But "RAG treats memory as a stateless lookup table: information persists indefinitely, retrieval is read-only, and temporal continuity is absent." This means RAG alone cannot track how a user's preferences evolve over time or handle conflicting facts from different sessions.

Second, benchmarks reveal how badly existing systems struggle. No LLM consistently outperforms others across all memory dimensions. Extensive evaluation shows that agent memory mechanisms do not necessarily enhance LLMs' capabilities and often exhibit notable efficiency limitations. Commercial chat assistants and long-context LLMs show a 30% accuracy drop on memorizing information across sustained interactions.

Third, cost and latency scale with context length. Longer context means higher API costs and slower responses. For consumer apps where every millisecond and cent counts, stuffing everything into the prompt is not sustainable.

The bottom line: stateless designs hit a wall. To build AI that remembers, you need an external memory layer that stores, updates, and retrieves information independently of the prompt.

Which Architectures Really Add Memory to LLM Agents?

Research over the past two years points to a handful of winning patterns for long-term memory.

These patterns share three traits: they are structured, persistent, and retrieval-aware.

"Our findings highlight critical role of structured, persistent memory mechanisms for long-term conversational coherence, paving the way for more reliable and efficient LLM-driven AI agents."

HyMem, for example, adopts a dual-granular storage scheme paired with a dynamic two-tier retrieval system. A lightweight module constructs summary-level context for efficient response generation, while an LLM-based deep module is selectively activated only for complex queries. Experiments show HyMem achieves strong performance on both the LOCOMO and LongMemEval benchmarks, reducing computational cost by 92.6% while maintaining accuracy.

EverMemOS takes a different approach, implementing an engram-inspired lifecycle for computational memory. Experiments on LoCoMo and LongMemEval show that EverMemOS achieves state-of-the-art performance on memory-augmented reasoning tasks.

Mem0 & Graph-Based Variants

Mem0 is a scalable memory-centric architecture that dynamically extracts, consolidates, and retrieves salient information from ongoing conversations. The pipeline consists of two phases: Extraction and Update, ensuring only the most relevant facts are stored and retrieved.

Mem0 consistently outperforms six leading memory approaches, achieving 26% higher response accuracy compared to OpenAI's memory, 91% lower latency compared to full-context methods, and 90% savings in token usage.

Mem0 with graph memory achieves around 2% higher overall score than the base Mem0 configuration by storing memories as directed labeled graphs with entities as nodes and relationships as edges. This structure captures complex relational information that flat vector stores miss.

On the security front, Mem0 is SOC 2 compliant and HIPAA-ready, designed with zero-trust access controls and BYOK encryption for enterprise deployments.

EverMemOS & Continuum Memory

EverMemOS organizes memory into three stages:

Episodic Trace Formation: Converts dialogue streams into MemCells that capture episodic traces, atomic facts, and time-bounded Foresight signals

Semantic Consolidation: Organizes MemCells into thematic MemScenes, distilling stable semantic structures and updating user profiles

Reconstructive Recollection: Performs MemScene-guided agentic retrieval to compose the necessary and sufficient context for downstream reasoning

The Continuum Memory Architecture (CMA) formalizes these principles, defining "a class of systems that maintain and update internal state across interactions through persistent storage, selective retention, associative routing, temporal chaining, and consolidation into higher-order abstractions." CMA is a necessary architectural primitive for long-horizon agents, although challenges around latency, drift, and interpretability remain.

Experiments on LoCoMo and LongMemEval show that EverMemOS achieves state-of-the-art performance on memory-augmented reasoning tasks, validating the engram-inspired approach.

How Do You Implement Memory: From RAG to Knowledge Graphs

Implementing memory in production requires practical engineering patterns, not just theoretical frameworks.

Chunking matters. Document-aware chunking that preserves tables, code blocks, and headers can improve domain-specific accuracy by 40%+. For most production RAG systems, recursive chunking with 400-800 token chunks and 20% overlap provides the best balance of performance and efficiency. Semantic chunking outperforms fixed-size methods by 15-25% in retrieval accuracy but costs 3-5x more computationally.

Memory compression is essential. SimpleMem, an efficient memory framework based on semantic lossless compression, achieves an average F1 improvement of 26.4% in LoCoMo while reducing inference-time token consumption by up to 30-fold.

Memory injection is where many systems fail. "Injection is where many systems fail: old memories become 'too strong,' or malicious text gets injected." Memory systems are orchestration pipelines, not just model behaviors. You need memory distillation to extract high-quality, durable signals from conversations and memory consolidation that runs asynchronously at the end of each session, graduating eligible session notes into global memory when appropriate.

Memory Type | Purpose | Lifecycle |

|---|---|---|

Working Memory | Current prompt, tool outputs | Single turn |

Short-term Memory | Recent messages, thread context | Single session |

Long-term Memory | Stored knowledge, past interactions | Cross-session |

Episodic Memory | Timeline of events, decisions | Persistent |

Preference Memory | User settings, constraints | Persistent |

Start with stateless RAG to de-risk and ship. When tasks span hours or days, add a small working-memory buffer and an episodic log for continuity and replay.

How Does Memory Drive Real-Time Personalization?

Memory is the foundation for personalization that actually works.

Cortex includes memory that improves over time, learning user preferences for formats, content types, and question styles to create personalized, habit-forming experiences. The system learns how individual users behave, what formats they prefer, and how they usually ask questions. Over time, this builds a sticky experience that keeps users coming back.

The business impact is measurable. Companies using AI-driven personalization see a 20% boost in sales and up to 25% better campaign performance within months. AI identifies patterns in customer data, like browsing habits or purchase history, to predict what someone needs at any moment.

AI memory systems excel at:

Real-time insights: Updating customer profiles instantly, enabling quick responses to cart abandonment or preference changes

Unified data: Combining data from all channels to create a single, accurate customer profile

Predictive analytics: Anticipating customer needs and behaviors for proactive engagement

Enhanced personalization: Delivering tailored experiences based on behavior, sentiment, and context

Key takeaway: Memory transforms AI from a generic assistant into a personalized collaborator that remembers user preferences and adapts over time.

Which Benchmarks Prove Your Memory System Works?

LongMemEval is the industry standard for evaluating long-term interactive memory. It measures five key memory abilities: information extraction, multi-session reasoning, temporal reasoning, knowledge updates, and abstention.

The standard LongMemEvalS configuration contains histories of approximately 115,000 tokens per instance, while LongMemEvalM features up to 500 sessions and 1.5 million tokens per instance. This scale reflects real-world conditions where users interact with AI assistants over months or years.

Commercial assistants struggle badly. Accuracy decreases by 30-60% compared to an oracle retrieval setting where the model receives only the relevant evidence. This gap reveals how much existing systems lose to noise, drift, and retrieval failures.

Top-performing systems on LongMemEval include:

System | Overall Score | Multi-Session | Temporal Reasoning |

|---|---|---|---|

OMEGA | 83% | 94% | |

Cortex | 90.23% | 76.69% | 90.97% |

Supermemory | 85.2% | 71.43% | 76.69% |

Memory-augmented approaches reduce token usage by over 90% while maintaining competitive accuracy. This efficiency matters for production systems where cost and latency are critical constraints.

Episodic memory can help LLMs recognize the limits of their own knowledge, which is crucial for avoiding hallucinations and knowing when to abstain from answering.

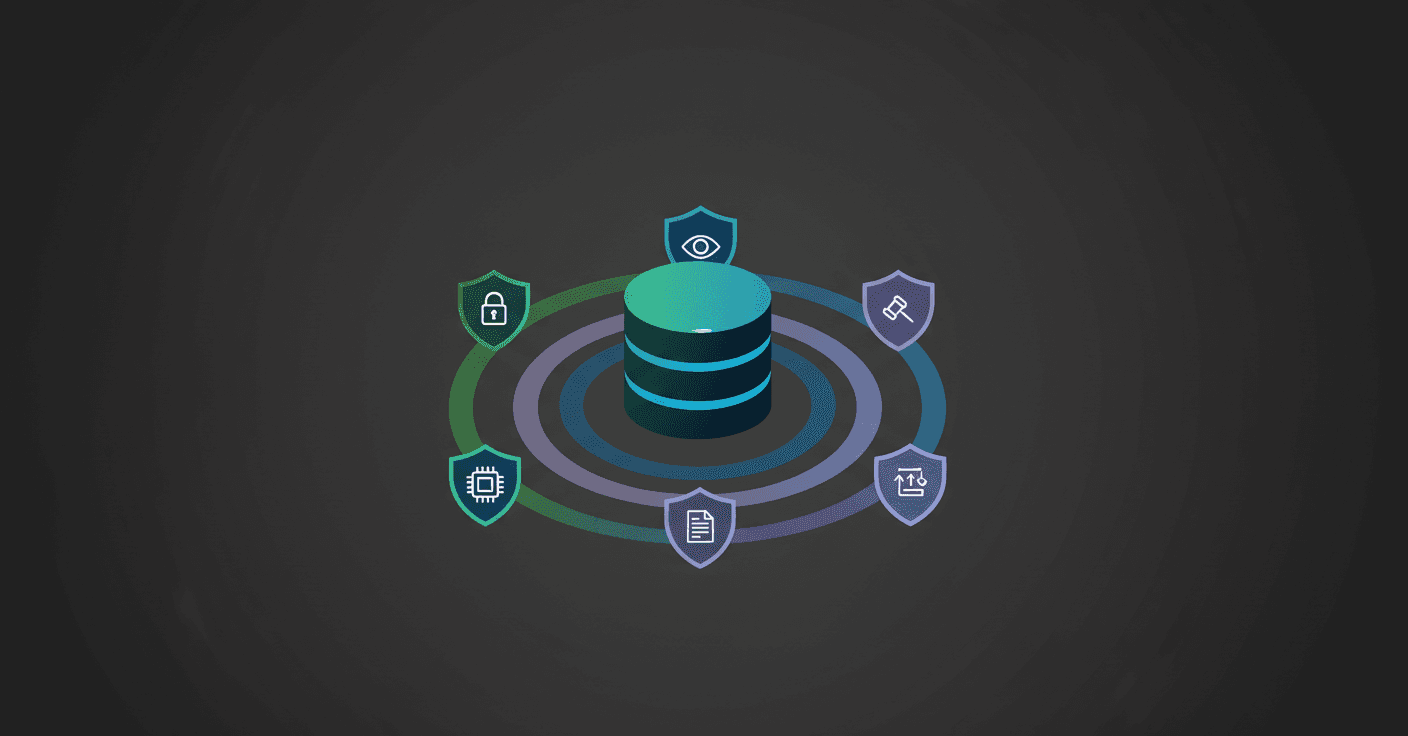

Security, Governance & User Trust

Memory systems store sensitive user data, making security and governance non-negotiable.

Security at Mem0, for example, is multi-layered and proactive. "From zero-trust access controls and BYOK encryption to real-time monitoring and incident response, every layer is built to prevent and contain risk."

The MemTrust architecture provides a hardware-backed zero-trust approach with cryptographic guarantees across five layers: Storage, Extraction, Learning, Retrieval, and Governance. Evaluation shows MemTrust achieves less than 20% performance overhead on enterprise workloads while providing local-equivalent confidentiality.

Agentic commerce is accelerating this shift. Dynamic planning with real-time adjustment refers to the ability of agentic systems to offer an end-to-end dynamic customer experience with real-time updates and adjustments. New protocols like the Agent Payments Protocol (AP2) and Model Context Protocol (MCP) are emerging to enable secure, verifiable interactions between AI agents and external systems.

Key governance requirements:

Audit-ready logs for compliance and debugging

Encrypted storage at rest and in transit

Tenant isolation for multi-user applications

User controls for data access, export, and deletion

Engineering Checklist: Extending Memory in Your App

Use this checklist to implement memory in your AI application:

Step | Action | Details |

|---|---|---|

1 | Choose memory architecture | Start with stateless RAG; add working-memory buffer and episodic log as tasks grow |

2 | Implement smart chunking | Use 400-800 token chunks with 20% overlap; preserve document structure |

3 | Add metadata labeling | Tag memories with timestamps, source, and confidence scores |

4 | Build memory distillation | Extract high-quality signals from conversations into durable memory |

5 | Create consolidation pipeline | Graduate session notes to global memory asynchronously |

6 | Monitor retrieval precision | Track recall and NDCG; use fact-augmented key expansion for indexing |

7 | Implement decay and conflict resolution | Memories should evolve, consolidate, and decay over time with transparent auditing |

8 | Add verification loops | "Verification is non-optional for reliable output" |

The core framework rests on five pillars: proactive context and memory management across sessions, token optimization through model selection and tool choice, verification loops to ensure quality, deliberate parallelization only when necessary, and investing in reusable patterns that compound in value over time.

Conclusion: Designing Memory as a First-Class Feature

Memory is not a feature to bolt on later. It is the architectural primitive that separates production-grade AI agents from demos.

The research is clear: structured, persistent memory mechanisms dramatically improve long-term conversational coherence while reducing costs by 90% or more. Systems like Mem0, EverMemOS, and Cortex demonstrate that purpose-built memory architectures outperform naive context stuffing across every dimension that matters.

Cortex isn't just about storing context. It learns from every interaction and gets smarter over time. For teams building AI agents where accuracy, latency, personalization, and long-term learning matter, memory-first platforms provide the infrastructure to ship production assistants in days, not months.

The choice is simple: build on a memory layer designed for scale, or keep re-inventing the same fragile stack that breaks as your user base grows.

Frequently Asked Questions

Why is memory important for consumer AI apps?

Memory is crucial for consumer AI apps because it allows the AI to remember user preferences and interactions across sessions, providing a personalized experience that enhances user retention and satisfaction.

What are the limitations of context windows in AI models?

Context windows in AI models have fixed token limits, which can lead to truncation of older information and hinder the model's ability to maintain consistency over prolonged interactions. This limitation necessitates the use of external memory layers for effective long-term memory management.

How does Cortex improve AI memory systems?

Cortex enhances AI memory systems by providing a self-improving retrieval and memory layer that integrates with various data sources, enabling persistent memory, personalization, and temporal reasoning, which are essential for production-grade AI applications.

What are some effective architectures for adding memory to AI agents?

Effective architectures for adding memory to AI agents include structured, persistent, and retrieval-aware systems like HyMem and EverMemOS, which use dual-granular storage and engram-inspired memory lifecycles to enhance long-term conversational coherence and efficiency.

How does memory drive real-time personalization in AI?

Memory enables real-time personalization by learning user preferences over time, allowing AI systems to deliver tailored experiences based on individual behavior, sentiment, and context, which can significantly boost user engagement and business outcomes.

Sources

https://developers.openai.com/cookbook/examples/agentssdk/contextpersonalization/

https://www.averi.ai/guides/how-ai-powers-real-time-marketing-personalization

https://www.averi.ai/guides/how-ai-memory-personalizes-customer-journeys

https://www.ais.com/practical-memory-patterns-for-reliable-longer-horizon-agent-workflows/

https://www.usecortex.ai/blog/how-to-refresh-or-update-stored-llm-memory-2026-guide

https://www.xarticle.news/article/tech-data/the-longform-guide-to-everything-claude-code