How to add persistent LLM memory to your GPT bot: Developer guide

To add persistent memory to a GPT bot, integrate a vector database like Qdrant for retrieval, implement hybrid episodic-semantic memory architecture, and use tools like Cortex for plug-and-play infrastructure or open-source Mem0 which achieves 91% lower p95 latency. This combination enables bots to recall user preferences, maintain conversation context beyond token limits, and personalize responses over time.

Key Implementation Steps

• Choose your vector database: Qdrant delivers highest RPS and lowest latencies, while Milvus excels at indexing speed for large-scale deployments

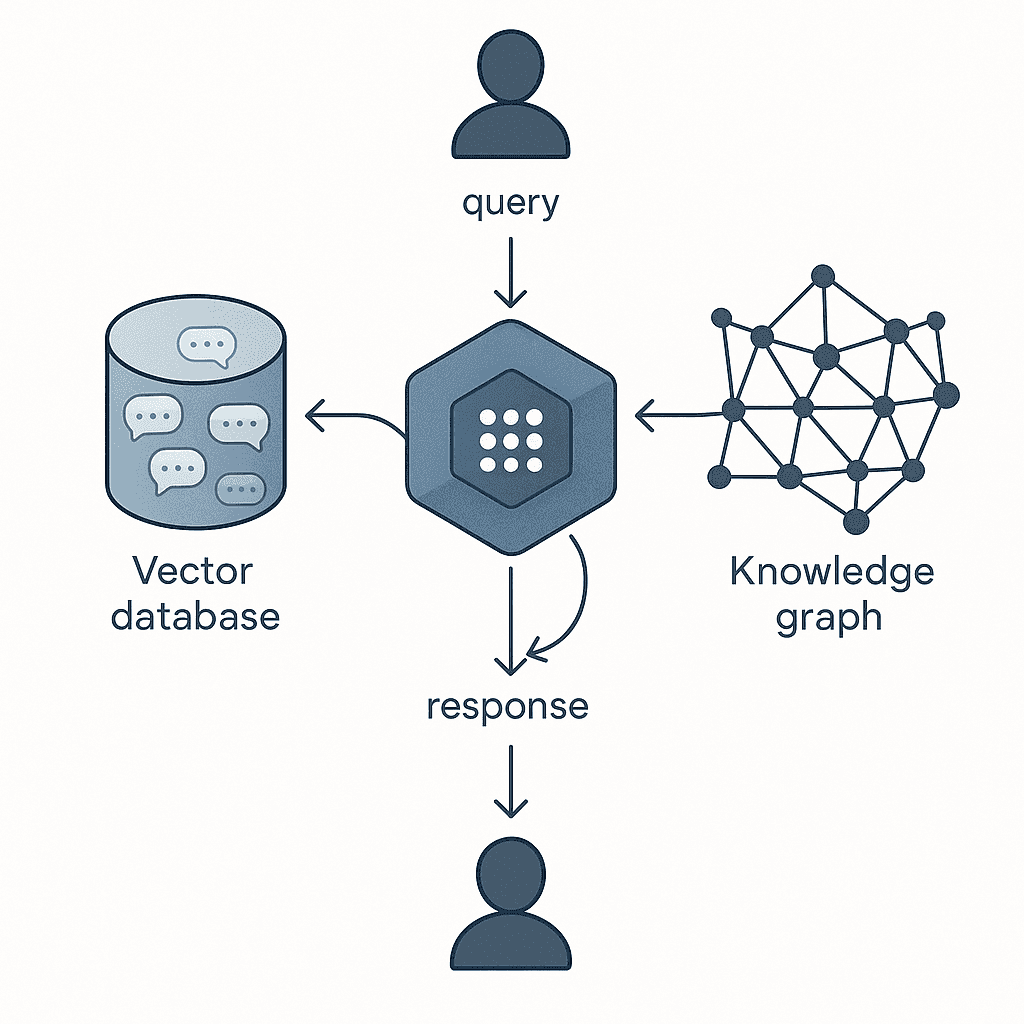

• Implement hybrid memory: Combine episodic memory for event-specific data with semantic memory for persistent facts and knowledge graphs

• Select integration approach: Use Cortex SDK for turnkey solution with built-in long-term memory or open-source Mem0 for 26% improvement over baseline OpenAI implementations

• Monitor critical metrics: Track factual consistency, sub-2s p95 latency, and token efficiency to prevent context poisoning and memory inflation

• Add personalization hooks: Store user preferences, behaviors, and history to enable adaptive responses that improve with each interaction

Users expect chatbots to remember them. They want a bot that recalls their name, preferences, and the context of last week's conversation. Yet transformers forget everything outside a prompt window. This guide shows developers how to bolt persistent LLM memory onto a GPT bot and avoid the usual scaling traps.

Why GPT bots need persistent memory

"Large Language Models (LLMs) have demonstrated remarkable prowess in generating contextually coherent responses, yet their fixed context windows pose fundamental challenges for maintaining consistency over prolonged multi-session dialogues." - arXiv

Context is like short-term RAM. Memory is like a persistent database. When a conversation exceeds the model's token limit, older exchanges vanish.

The bot then forgets user preferences, repeats questions, and contradicts previously established facts. This gap matters because most multi-agent AI systems fail not because agents can't communicate, but because they can't remember. Without a structured memory layer, even well-engineered bots break down after a handful of sessions.

Memory architectures: episodic, semantic & hybrid patterns

Modern memory systems for AI agents draw on cognitive science. Two primary patterns dominate:

Episodic memory stores event-specific data, including temporal and contextual details.

Semantic memory stores factual knowledge, rules, and concepts independent of specific events.

Agents with both episodic and semantic memory exhibit superior performance in dynamic environments and tasks demanding both context-specific recall and general knowledge.

Research confirms the value of hybrid approaches. One architecture combines episodic and semantic memory components with a proactive "Intelligent Decay" mechanism, yielding superior task completion rates, contextual consistency, and long-term token cost efficiency. Current large language model chatbots make factual errors up to 42% of the time when generating biographies, underscoring the need for external memory.

Episodic memory stores

Episodic memory captures event-level details. Common tools include:

Vector databases that store conversation snippets as embeddings

Timestamped logs that preserve temporal context

Session-based caches that group interactions by user or thread

Episodic memory stores event-specific data, including temporal and contextual details. This allows a bot to recall what happened in a specific conversation, not just what it knows in general.

Semantic & knowledge-graph stores

Semantic memory encodes enduring facts. Knowledge graphs represent information as nodes (entities) and edges (relationships), providing structured storage for semantic and episodic memories.

Mem0g extends this foundation by incorporating graph-based memory representations, where memories are stored as directed labeled graphs with entities as nodes and relationships as edges. This structure captures richer, multi-session relationships and improves temporal reasoning.

Which vector database best fits your memory layer?

Selecting the right vector database depends on your workload. The table below summarizes key trade-offs:

Database | Strengths | Weaknesses |

|---|---|---|

Qdrant | Highest RPS, lowest latencies across most scenarios | Fewer managed hosting options |

Milvus | Fastest indexing time, good precision | RPS and latency lag with high-dimensional embeddings |

Redis | Good RPS at lower precision | Latency increases quickly under parallel requests |

Elastic | Improved speed | Indexing can be 10x slower (32 min vs 5.5 hrs for 10M+ vectors) |

Weaviate | Stable | Minimal improvement in recent benchmarks |

Qdrant achieves highest RPS and lowest latencies in almost all scenarios, regardless of precision threshold or metric choice.

Why many teams pick Qdrant

Qdrant uses a different approach to filtering, not requiring pre- or post-filtering while addressing the accuracy problem. This design delivers consistent wins in both RPS and latency, making it a popular choice for memory layers that demand real-time retrieval.

Milvus for large-scale memory

Milvus is the fastest when it comes to indexing time and maintains good precision. However, it's not on par with others when it comes to RPS or latency when you have higher-dimension embeddings or more vectors. For teams prioritizing rapid ingestion over query speed, Milvus is worth evaluating.

How do you add Cortex's memory layer to a GPT bot?

Cortex provides plug-and-play memory infrastructure designed for AI applications. It offers built-in long-term memory that evolves with every user interaction, dynamic retrieval, and personalization hooks for user preferences, intent, and history.

The following steps walk through a typical integration.

1. Install & authenticate Cortex SDK

Cortex now supports an embeddings endpoint fully compatible with OpenAI's API. Start by installing the SDK and authenticate with your API key. After installation, connect to your embeddings index.

For example, you can create embeddings with: client.embeddings.create(input="Roses are red, violets are blue, Cortex is great, and so is Jan too!", model="llama3.1:8b-gguf-q4-km")

Cortex also supports the same input types as OpenAI.

2. Store, retrieve & personalize memories

Cortex includes memory that improves over time. The system learns how individual users behave: what formats they prefer (e.g., tables, summaries), what content types they favor (e.g., spreadsheets, slides), and how they usually ask questions.

Consider a knowledge assistant for a sales team. Alex, a rep, prefers answers in bullet points and always looks for XLSX files. The AI starts recognizing this and automatically responds in bullet points while prioritizing spreadsheet sources. This personalization makes every interaction feel tailored instead of generic.

Key takeaway: Cortex doesn't just fetch documents. It learns, adapts, and gets smarter with time.

Should you use open-source memory layers like Mem0 or LangMem?

Open-source options offer flexibility but require more infrastructure work. The table below compares popular choices:

Tool | Best For | Trade-offs |

|---|---|---|

Mem0 | Production chat assistants with <2s SLA | Highest recall for the latency; graph variant consumes more tokens |

LangMem | Lightweight vector scan | Stalls at ~60s under heavy load |

MemGPT | Document-heavy agents | Virtual context management excels at deep memory retrieval |

Mem0 achieves 26% relative improvements in the LLM-as-a-Judge metric over OpenAI, while Mem0 with graph memory achieves around 2% higher overall score than the base configuration.

Mem0 & graph variant

"Mem0 attains a 91% lower p95 latency and saves more than 90% token cost, thereby offering a compelling balance between advanced reasoning capabilities and practical deployment constraints." - arXiv

Mem0's pipeline consists of two phases: Extraction and Update. This ensures only the most relevant facts are stored and retrieved, minimizing tokens and latency. The graph-enhanced variant adds explicit edges for temporal reasoning, boosting accuracy on time-sensitive queries.

LangMem API

LangMem helps agents learn and adapt from their interactions over time. It provides a core memory API that works with any storage system, memory management tools for active conversations, and a background memory manager that automatically extracts, consolidates, and updates agent knowledge.

For production, use AsyncPostgresStore or a similar DB-backed store to persist memories across server restarts. The agent gets to decide what and when to store the memory, with no special commands needed.

MemGPT for document-heavy agents

"MemGPT, or MemoryGPT, is a system specially designed for tasks like extended conversations and document analysis which are traditionally hindered by the limited context windows of modern Large Language Models (LLMs)." - Milvus Docs

MemGPT uses a technique inspired by hierarchical memory systems in traditional operating systems, called virtual context management. This approach shines when agents must analyze large documents or maintain very long conversation histories.

What pitfalls sabotage long-term GPT memory?

Even well-designed memory systems can fail. Watch for these traps:

Context poisoning: Hallucinations contaminate future reasoning, creating a feedback loop of increasingly inaccurate responses.

Memory inflation: Dialogue history and tool responses rapidly consume the finite context window and increase token cost, often necessitating forced truncation.

Contextual degradation: As older, potentially critical information is pushed out of the context window, the agent's ability to recall and leverage past knowledge diminishes.

Mitigation strategies include:

Implement intelligent decay mechanisms that prune low-utility memories

Use retrieval-augmented generation (RAG) for semantic knowledge access

Apply dynamic context pruning to remove irrelevant information

Validate retrieved memories before injecting them into prompts

Measuring success: accuracy, latency & token cost

To benchmark memory quality, evaluate across these dimensions:

Factual consistency after 600-turn chats

Sub-second responsiveness (p95 latency under 2s for production chat)

Token efficiency to keep bills manageable

Reasoning quality on single-hop, multi-hop, temporal, and open-domain questions

Requests-per-Second (RPS) measures throughput: serve more requests per second in exchange for individual requests taking longer. Latency measures how quickly individual requests complete.

The Letta Leaderboard measures memory management for three capabilities of a stateful agent: reading, writing, and updating. Top-performing models like Claude 4 Sonnet and GPT 4.1 consistently deliver high scores across core and archival memory tasks.

Build once, remember forever

Persistent LLM memory transforms a stateless chatbot into a system that learns and adapts. Key takeaways:

Fixed context windows cause bots to forget; external memory solves this.

Hybrid architectures combining episodic and semantic memory deliver the best results.

Vector database choice matters: Qdrant leads on RPS and latency; Milvus excels at indexing.

Open-source tools like Mem0 and LangMem offer flexibility but require infrastructure work.

Watch for context poisoning, memory inflation, and contextual degradation.

Cortex provides plug-and-play memory infrastructure with built-in long-term memory that evolves with every user interaction, personalization hooks, and a developer-first SDK. For teams that want persistent memory without managing the underlying complexity, Cortex offers a path to bots that remember.

Frequently Asked Questions

Why do GPT bots need persistent memory?

GPT bots need persistent memory to maintain consistency over prolonged dialogues. Without it, they forget user preferences and repeat questions, leading to a poor user experience.

What are the main types of memory architectures for AI agents?

The main types of memory architectures are episodic and semantic memory. Episodic memory stores event-specific data, while semantic memory stores factual knowledge independent of specific events.

Which vector database is best for a memory layer?

Qdrant is often preferred for its high requests-per-second (RPS) and low latency. Milvus is also popular for its fast indexing time, though it may lag in RPS and latency with high-dimensional embeddings.

How does Cortex's memory layer enhance GPT bots?

Cortex provides a plug-and-play memory infrastructure with built-in long-term memory, dynamic retrieval, and personalization hooks, allowing GPT bots to learn and adapt over time.

What are common pitfalls in long-term GPT memory systems?

Common pitfalls include context poisoning, memory inflation, and contextual degradation. These can be mitigated with intelligent decay mechanisms and retrieval-augmented generation.