Cortex vs Mem0 for LLM Memory: 2025 Features & Pricing

Cortex and Mem0 both provide memory infrastructure for LLM applications, but Cortex offers self-improving memory that learns user preferences while Mem0 focuses on static retrieval accuracy. Cortex includes plug-and-play integrations with enterprise tools and starts at $0 with $5 monthly credits, while Mem0's free tier limits usage to 25 notes monthly.

Key Facts

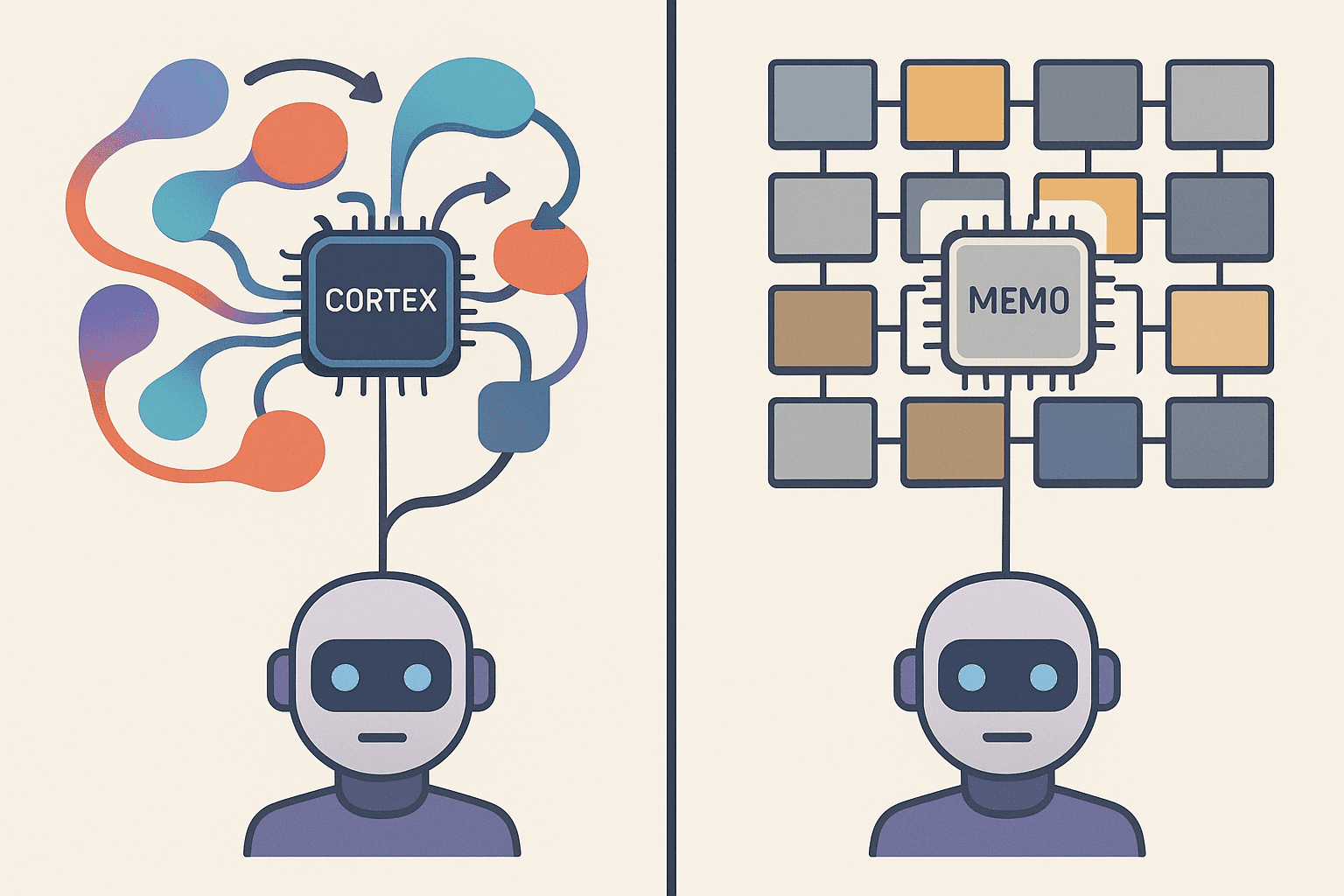

• Architecture differences: Cortex features dynamic memory that evolves with interactions, learning user preferences like format choices and content types, while Mem0 emphasizes retrieval accuracy without adaptive learning

• Integration complexity: Mem0 users report tool invocation issues starting from the second interaction, requiring memory to be cleared between conversations for the agent to function properly

• Pricing models: Cortex offers pay-as-you-go starting at $0 with $5 in monthly credits and unlimited workflows, versus Mem0's free tier capped at 25 notes and 25 chat messages per month

• Performance focus: Mem0 achieves 26% higher response accuracy than OpenAI's memory on the LOCOMO benchmark, with a 0.148s median retrieval latency, while Cortex prioritizes personalization that improves engagement through tailored responses

• Enterprise readiness: Both platforms provide SOC 2 compliance, but Cortex includes native connectors for Slack, Gmail, and Notion versus Mem0's SDK-based integrations

Modern AI agents live or die by the quality of their memory. In this head-to-head on Cortex vs Mem0, we'll decode which platform delivers smarter, cheaper, and more reliable LLM memory in 2025.

Why Compare Cortex and Mem0 in 2025?

The battle for next-generation LLM memory has intensified as AI agents become central to enterprise workflows. Both Cortex and Mem0 promise to solve the persistent context problem that breaks agents in production, but they approach it with fundamentally different architectures.

Cortex positions itself as plug-and-play memory infrastructure that powers intelligent, context-aware retrieval for any AI app or agent. The platform emphasizes dynamic retrieval, built-in long-term memory, and personalization hooks that evolve with user interactions.

Mem0, meanwhile, has emerged as a scalable memory-centric architecture that dynamically extracts, consolidates, and retrieves salient information from conversations. According to their published benchmarks, Mem0 leads overall in accuracy, striking the best balance across single-hop, multi-hop, and temporal reasoning tasks.

For CTOs and engineers building AI agents, the decision between these platforms hinges on three critical factors:

Architecture: Does the memory layer adapt and improve, or simply store and retrieve?

Integration: How quickly can you ship with your existing stack?

Price: What are the true costs at scale, including hidden infrastructure overhead?

How Do Memory Layers Supercharge Large Language Models?

Memory layers use a trainable key-value lookup mechanism to add extra parameters to a model without increasing FLOPs. This approach fundamentally changes what LLMs can accomplish in production.
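The key-value lookup idea can be sketched in plain Python. The sketch scores a query against a table of keys, keeps only the top-k matches, and returns a softmax-weighted mix of their values. Because only k values are mixed into the output, the value table can grow large while the mixing cost stays fixed. Scoring every key, as this toy does, still costs O(N). The Meta research avoids that full scan by building on product-key lookups, and in that work the keys and values are trained parameters rather than fixed lists.

This is an in-model mechanism, distinct from the external memory services compared in this article. The name `kv_memory_lookup` and its parameters are illustrative.

```python
import math


def kv_memory_lookup(query, keys, values, k=2):
    """Toy sparse key-value read: score all keys, keep the top-k, softmax-mix their values."""
    # Dot-product similarity between the query and every key (a full O(N) scan).
    scores = [sum(q * x for q, x in zip(query, key)) for key in keys]
    # Indices of the k highest-scoring keys.
    top = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    # Softmax over the selected scores only; subtracting the max keeps exp() stable.
    peak = max(scores[i] for i in top)
    weights = [math.exp(scores[i] - peak) for i in top]
    total = sum(weights)
    # Weighted sum of the selected value vectors; unselected values are never touched.
    out = [0.0] * len(values[0])
    for w, i in zip(weights, top):
        for d, v in enumerate(values[i]):
            out[d] += (w / total) * v
    return out, top


keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
vec, used = kv_memory_lookup([1.0, 0.0], keys, values, k=2)
# used == [0, 1]; vec is roughly [7.31, 2.69]
```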

The rapid growth of large language models has been marked by an exponential increase in their size: models with tens of billions of parameters gain capabilities but also place ever-greater demands on hardware memory.

Memory layers address this by enabling efficient storage and retrieval without bloating context windows.

Research from Meta AI demonstrates that memory layers improve factual accuracy by over 100% as measured by factual QA benchmarks, while also improving significantly on coding and general knowledge tasks. Language models augmented with improved memory layers outperform dense models with more than twice the computation budget.

The core pillars of effective LLM memory include:

| Pillar | Function | Impact |
|---|---|---|
| Context Recall | Retrieving relevant past interactions | Enables continuity across sessions |
| Temporal Reasoning | Understanding sequence and timing of events | Supports "before/after" queries |
| Token Efficiency | Minimizing context window usage | Reduces costs and latency |

Key takeaway: Memory layers transform LLMs from stateless responders into systems that learn and adapt -- but the implementation determines whether that potential translates to production value.
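The Context Recall and Token Efficiency pillars pull against each other: the more memories you inject, the more each call costs. One common compromise is to greedily pack the highest-scoring memories until a token budget is reached. The minimal sketch below assumes each memory already carries a relevance score. The whitespace token count is a crude stand-in for a real tokenizer, and `pack_memories` is a hypothetical helper, not part of either platform.

```python
def pack_memories(memories, budget_tokens, count_tokens=lambda s: len(s.split())):
    """Greedily select the most relevant memories that fit within a token budget.

    memories: list of (relevance_score, text) pairs.
    count_tokens: whitespace word count, a rough stand-in for a real tokenizer.
    """
    chosen, used = [], 0
    # Visit memories from most to least relevant; skip any that would overflow the budget.
    for _score, text in sorted(memories, key=lambda m: m[0], reverse=True):
        cost = count_tokens(text)
        if used + cost <= budget_tokens:
            chosen.append(text)
            used += cost
    return chosen, used


memories = [
    (0.9, "user prefers bullet-point summaries"),
    (0.4, "user asked about pricing last week in detail"),
    (0.7, "user works in legal ops"),
]
context, tokens_used = pack_memories(memories, budget_tokens=10)
# context keeps the 0.9 and 0.7 memories (9 tokens); the 8-token memory no longer fits
```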

Can Your Memory Learn? Cortex's Self-Improving Layer vs Mem0's Static Store

Cortex includes memory that improves over time. The system learns how individual users behave -- what formats they prefer, what content types they favor, and how they usually ask questions. According to Cortex documentation, this makes every interaction feel more personal, driving higher engagement and better results.

Mem0 describes itself as "a universal, self-improving memory layer for LLM applications." Their homepage claims the platform powers personalized AI experiences that cut costs and enhance user delight. However, the architecture tells a different story.

Mem0's pipeline consists of two phases -- Extraction and Update -- ensuring only the most relevant facts are stored and retrieved. While this optimizes for latency and token efficiency, the research paper focuses primarily on retrieval accuracy rather than adaptive learning from user behavior.
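The two-phase flow can be sketched as a toy, assuming facts are simple key-value pairs. In the Mem0 paper, an LLM extracts candidate facts and then chooses one of four operations per fact (ADD, UPDATE, DELETE, NOOP) against existing memories. Here a line parser (`extract_facts`) and exact-match rules (`update_store`) stand in for those LLM calls, and both names are hypothetical. Treat this as an illustration of the control flow rather than Mem0's implementation.

```python
def extract_facts(message):
    """Stand-in for the LLM extraction phase: parses 'key: value' lines (assumption)."""
    facts = {}
    for line in message.splitlines():
        if ":" not in line:
            continue
        key, value = line.split(":", 1)
        # An empty value is treated as the user retracting that fact.
        facts[key.strip().lower()] = value.strip() or None
    return facts


def update_store(store, facts):
    """Stand-in for the update phase: pick ADD/UPDATE/DELETE/NOOP per fact and apply it."""
    ops = []
    for key, value in facts.items():
        if value is None:
            op = "DELETE" if key in store else "NOOP"
            store.pop(key, None)
        elif key not in store:
            op = "ADD"
            store[key] = value
        elif store[key] != value:
            op = "UPDATE"
            store[key] = value
        else:
            op = "NOOP"  # fact already stored unchanged; nothing to write
        ops.append((op, key))
    return ops


memory = {"name": "Ada", "city": "Berlin", "editor": "vim"}
ops = update_store(memory, extract_facts("Name: Ada\nCity: Munich\nLanguage: Python\nEditor:"))
# ops: [('NOOP', 'name'), ('UPDATE', 'city'), ('ADD', 'language'), ('DELETE', 'editor')]
```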

The distinction becomes clearer when examining real-world performance:

Mem0 achieves 26% higher response accuracy compared to OpenAI's memory on the LOCOMO benchmark

An agentic memory framework achieves 55% accuracy using 16x fewer tokens, relying on 2k-token memory instead of full 32k conversation histories

Research on MemGen shows dynamic memory frameworks can outperform static systems by up to 38.22%

Cortex's approach mirrors how humans adapt communication style based on who they're speaking with.

The platform recalls what users liked before, re-ranks answers based on user behavior, and offers personalized responses from evolving context.
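Behavior-based re-ranking can be illustrated with a small sketch. Assume each candidate answer has a base relevance score and a format label, such as "table" or "prose". The hypothetical `PreferenceReranker` below counts which formats a user has responded to positively and adds a boost proportional to that share. It is not Cortex's API, which this article does not document. It only shows the general shape of learning a preference and feeding it back into ranking.

```python
from collections import Counter


class PreferenceReranker:
    """Toy preference memory (hypothetical): boosts answer formats a user has liked."""

    def __init__(self, boost=0.1):
        self.boost = boost
        self.likes = Counter()  # format -> number of positive interactions

    def record_feedback(self, fmt, liked):
        # Only positive signals are counted in this simplified version.
        if liked:
            self.likes[fmt] += 1

    def rerank(self, candidates):
        """candidates: list of (base_score, fmt, text); returns texts, best first."""
        total = sum(self.likes.values()) or 1  # avoid dividing by zero for new users

        def score(candidate):
            base, fmt, _ = candidate
            # Share of past positive feedback for this format, scaled by the boost.
            return base + self.boost * self.likes[fmt] / total

        return [text for _, _, text in sorted(candidates, key=score, reverse=True)]


reranker = PreferenceReranker(boost=0.2)
reranker.record_feedback("table", liked=True)
reranker.record_feedback("table", liked=True)
reranker.record_feedback("prose", liked=False)
candidates = [(0.80, "prose", "A"), (0.75, "table", "B")]
ranked = reranker.rerank(candidates)  # the table answer "B" now outranks "A"
```

Scaling the boost by the share of positive feedback, rather than the raw count, caps the boost at `boost`. A long-time user's history therefore cannot swamp relevance entirely.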

Which Platform Integrates Faster with Real-World Stacks?

Cortex offers one-click connectors for the tools engineering teams already use:

Google Sheets, Drive, and Calendar

Gmail and Slack

Notion for knowledge management

OpenAI, Anthropic, Google AI, xAI, and Azure AI (bring your own API keys)

OpenRouter, Groq, and Mistral AI for model flexibility

Mem0 seamlessly integrates with popular AI frameworks including LangChain, LlamaIndex, and Vercel AI SDK.

The platform offers a framework-agnostic memory layer and universal integration through Mem0 MCP.

However, GitHub issues reveal friction points in Mem0's integration story. Users report encountering errors like "langchain_memgraph is not installed" despite following standard setup procedures.

A separate issue documents LangMem compatibility problems with LangGraph 1.0.0, surfacing import errors that block deployment.

For teams with aggressive timelines, these integration hiccups translate to days of debugging rather than hours to production.

Cortex's approach of native connectors -- rather than SDK wrappers -- reduces the surface area for compatibility issues.

Key takeaway: Both platforms support major frameworks, but Cortex's direct integrations with productivity tools (Slack, Gmail, Notion) reduce implementation complexity for enterprise deployments.

What Do Cortex and Mem0 Cost in 2025?

Cortex offers a straightforward pricing structure:

| Plan | Cost | Includes |
|---|---|---|
| Pay as you go | $0 commitment | $5 monthly credits, unlimited workflows, API access, email support |
| Enterprise | Custom pricing | Large-scale custom workflows, fine-tuned models, premium support |

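The pricing commonly cited for Mem0 follows a different model. However, the plan names (Mem Pro, Mem Teams) and the note-based limits below match Mem, a separate note-taking app. Confirm Mem0's current tiers on its own pricing page before budgeting: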

| Plan | Cost | Includes |
|---|---|---|
| Free | $0 | 25 notes/month, 25 chat messages/month |
| Mem Pro | $12/month | Unlimited notes, chat, collections, templates, API keys |
| Mem Teams | Custom | Group billing, priority support, dedicated success manager, SLAs |

The hidden cost consideration lies in infrastructure overhead. Mem0 claims 90% savings in token usage compared to full-context methods, which translates to lower LLM API costs.

However, teams must factor in debugging time for integration issues and the opportunity cost of delayed deployments.
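A back-of-the-envelope sketch shows how a token reduction like Mem0's claimed 90% flows through to spend. Every number in it is a hypothetical placeholder: the context sizes, the request volume, and the $2.50 per million input tokens price. They are chosen so that the memory path uses 90% fewer tokens; substitute your own model's pricing and traffic. The sketch covers input tokens only. Output tokens are billed separately, and memory compression does not reduce them.

```python
def monthly_input_cost(tokens_per_request, requests_per_month, usd_per_million_tokens):
    """Monthly spend on prompt (input) tokens for a given context size."""
    return tokens_per_request * requests_per_month / 1_000_000 * usd_per_million_tokens


# Hypothetical workload and price; replace with your own figures.
FULL_CONTEXT_TOKENS = 20_000  # entire conversation history resent on each request
MEMORY_TOKENS = 2_000         # only retrieved memories (a 90% reduction)
REQUESTS = 100_000
PRICE = 2.50                  # USD per million input tokens

full = monthly_input_cost(FULL_CONTEXT_TOKENS, REQUESTS, PRICE)
mem = monthly_input_cost(MEMORY_TOKENS, REQUESTS, PRICE)
savings = 1 - mem / full
print(f"${full:,.0f} vs ${mem:,.0f} ({savings:.0%} saved)")
# prints: $5,000 vs $500 (90% saved)
```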

Cortex's no-commitment plan, with $5 in monthly credits, provides a lower barrier to experimentation. For teams validating memory architectures before scaling, this pay-as-you-go approach reduces financial risk.

Cortex vs Mem0 Benchmarks: Who Leads on Accuracy & Latency?

Public benchmarks provide concrete performance data for production planning.

Mem0's research demonstrates strong retrieval performance:

Lowest retrieval latency among tested methods (p50: 0.148s, p95: 0.200s)

66.9% accuracy with 0.71s median and 1.44s p95 end-to-end response time

Approximately 7k tokens per conversation on average
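When turning figures like these into an SLA, compute percentiles from your own traces rather than relying on published numbers. The minimal sketch below uses the nearest-rank definition of a percentile. The latency samples are made up for illustration, and `meets_sla` is a hypothetical helper.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def meets_sla(samples, budget_s, p=95):
    """True if the p-th percentile latency fits within the budget (in seconds)."""
    return percentile(samples, p) <= budget_s


# Made-up retrieval latencies in seconds, standing in for your own traces.
latencies_s = [0.12, 0.15, 0.14, 0.20, 0.13, 0.16, 0.18, 0.15, 0.14, 0.19]
p50 = percentile(latencies_s, 50)  # 0.15
p95 = percentile(latencies_s, 95)  # 0.2
```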

The LOCOMO benchmark results show Mem0 leading on single- and multi-hop tasks, with the graph-enhanced variant adding three extra points on temporal questions thanks to its explicit edges.

Meanwhile, selective retrieval keeps Mem0 interactive, whereas LangMem's vector scan stalls at approximately 60 seconds.

Supermemory's research introduces another reference point, achieving state-of-the-art results on LongMemEval with particular strength in multi-session reasoning (71.43%) and temporal reasoning (76.69%) -- areas where standard vector-store approaches historically struggle.

"The ability to accurately recall user details, respect temporal sequences, and update knowledge over time is not a 'feature' -- it is a prerequisite for Agentic AI." -- Supermemory Research

For production deployments with sub-2-second SLAs, Mem0's dense variant offers the highest recall for the latency budget.

For CRM or legal timeline queries requiring temporal reasoning, Mem0's graph variant (Mem0ᴳ) provides edge traversal that solves "before/after X?" questions effectively.
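A toy graph shows why explicit edges help with "before/after X?" questions. Each edge records that one event preceded another, so answering the question becomes a graph traversal instead of a similarity search. The `TemporalGraph` class is a hypothetical schema for illustration only, not Mem0ᴳ's actual data model.

```python
from collections import defaultdict, deque


class TemporalGraph:
    """Toy temporal memory with explicit 'precedes' edges (hypothetical schema)."""

    def __init__(self):
        self.succ = defaultdict(set)  # event -> events that directly follow it
        self.pred = defaultdict(set)  # event -> events that directly precede it

    def add_precedes(self, earlier, later):
        self.succ[earlier].add(later)
        self.pred[later].add(earlier)

    def _reach(self, start, edges):
        # Breadth-first traversal collecting every event reachable via the given edges.
        seen, queue = set(), deque([start])
        while queue:
            node = queue.popleft()
            for nxt in edges[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen

    def before(self, event):
        return self._reach(event, self.pred)

    def after(self, event):
        return self._reach(event, self.succ)


g = TemporalGraph()
g.add_precedes("contract drafted", "contract signed")
g.add_precedes("contract signed", "invoice sent")
g.add_precedes("contract signed", "kickoff call")
earlier = g.before("invoice sent")  # {"contract drafted", "contract signed"}
```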

Security, Privacy & Compliance Considerations

Cortex is SOC 2 Type II certified with backing from Microsoft for Startups, Google for Startups, and AWS Startups.

The platform provides enterprise-grade protection against unauthorized access, role-based access control, and secure Stripe payments maintaining PCI Level 1 compliance.

Mem0 is SOC 2 and HIPAA compliant with BYOK (bring your own key) support, keeping data secure and audit-ready. The platform also ships audit logs and workspace governance by default.

However, memory-specific attack vectors present unique security challenges.

Research on LLM agent memory reveals significant privacy risks from memory extraction. The MEXTRA attack proved effective at extracting private information from agent memory in black-box settings, highlighting the urgent need for robust memory safeguards.

A comprehensive threat model for GenAI agents identifies memory persistence as an underexplored attack vector.

Long-term memory -- a key feature of both Cortex and Mem0 -- creates novel risks that traditional security frameworks may not fully address:

Cognitive architecture vulnerabilities

Temporal persistence threats

Operational execution vulnerabilities

Trust boundary violations

Both platforms offer enterprise security foundations, but teams should implement additional safeguards specific to their memory architecture and data sensitivity requirements.
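One such safeguard is to scrub obvious identifiers before anything is written to long-term memory, which leaves an extraction attack less to find. The minimal sketch below assumes plain-text memories. The regular expressions are deliberately crude: they will miss some formats and over-match others, such as long digit runs. `redact` is a hypothetical helper rather than a feature of either platform.

```python
import re

# Crude patterns for illustration; production systems need a proper PII detector.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s()-]{7,}\d")


def redact(text):
    """Replace email addresses and phone-like digit runs before storing a memory."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


safe = redact("Reach me at jane.doe@example.com or +1 (555) 123-4567.")
# safe == "Reach me at [EMAIL] or [PHONE]."
```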

Making the Choice: When Cortex Edges Out Mem0

Cortex doesn't just fetch documents. It learns, adapts, and gets smarter with time. This architectural difference becomes decisive for specific use cases.

Choose Cortex when:

Your agents need to learn user preferences over months of interaction

You require native integrations with Slack, Gmail, and Notion

Self-improving personalization is a core product requirement

You want built-in long-term memory that evolves with every interaction

Consider Mem0 when:

Raw retrieval accuracy is the primary metric

Your team has bandwidth to debug integration issues

Temporal reasoning with graph-based memory fits your use case

You're already deep in the LangChain ecosystem

For CTOs building production AI agents in 2025, Cortex's combination of self-improving memory, native enterprise integrations, and transparent pricing positions it as the safer long-term bet.

The platform's plug-and-play architecture reduces time-to-production while its adaptive memory layer ensures agents actually get better over time -- not just bigger.

Explore Cortex's documentation to evaluate how the platform fits your agent architecture, or start with the pay-as-you-go tier to test memory performance against your specific workloads.

Frequently Asked Questions

What are the key differences between Cortex and Mem0?

Cortex offers a self-improving memory layer that adapts to user interactions, while Mem0 focuses on retrieval accuracy with a static memory store. Cortex integrates natively with tools like Slack and Gmail, whereas Mem0 provides a framework-agnostic memory layer.

How does Cortex's self-improving memory work?

Cortex's memory layer learns from user interactions, adapting to preferences and improving over time. This personalization enhances engagement and results, making it ideal for applications requiring long-term user interaction.

What integration challenges does Mem0 face?

Mem0 users have reported integration issues, such as errors with LangChain and LangMem compatibility. These challenges can lead to extended debugging times, impacting deployment schedules.

How do Cortex and Mem0 compare in terms of pricing?

Cortex offers a pay-as-you-go model with no commitment, providing $5 monthly credits. Mem0 has a free tier and a $12/month Pro plan, with custom pricing for teams. Cortex's model is more flexible for experimentation.

What security measures do Cortex and Mem0 implement?

Cortex is SOC 2 Type II certified, offering enterprise-grade security. Mem0 is SOC 2 and HIPAA compliant, with BYOK support. Both platforms address security, but memory-specific risks require additional safeguards.