How to Correct Incorrect LLM Memories [2026 Guide]

Correcting incorrect LLM memories requires detecting failures through benchmarks like LONGMEMEVAL, which shows 30% accuracy drops in commercial systems, then applying graph-based belief updating or dual-path retrieval strategies. Modern memory layers like Cortex achieve 90.23% accuracy on LongMemEval-s by embedding temporal reasoning and knowledge updates directly into the retrieval architecture.

At a Glance

• Memory failures stem from three core issues: parametric bias (models trusting training data over new context), imperfect retrieval introducing noise, and hallucination from knowledge gaps

• LONGMEMEVAL tests five critical abilities: information extraction, multi-session reasoning, temporal reasoning, knowledge updates, and knowing when not to answer

• Graph-based architectures like RecallM demonstrate 4x improvement over vector databases for updating stored knowledge

• Long-context LLMs show 30-60% performance drops on memory tasks involving 115k+ token contexts

• Cortex achieves 90.23% overall accuracy on LongMemEval-s benchmarks, with 90.97% on temporal reasoning and 94.87% on knowledge updates

• Effective correction requires dual-path retrieval, source verification, confidence scoring, and continuous learning from user feedback

Large-scale AI applications stumble when you must correct incorrect LLM memories. A single outdated fact, a misremembered user preference, or a failure to recognize that information has changed can cascade into costly errors, broken user trust, and agents that feel fundamentally unreliable. For teams shipping production AI, the cost of memory failures is measured in churn, support tickets, and lost revenue.

This guide breaks down why LLMs misremember, the common failure modes that lead to stale or wrong memories, practical detection strategies, and five proven correction techniques. We also compare the benchmarks that matter in 2026 and evaluate memory layer options, including how Cortex's architecture addresses the hardest memory challenges.

Why do large language models misremember?

Large language models do not have memory in the human sense. Instead, they rely on two sources of knowledge: parametric knowledge baked into their weights during training, and contextual knowledge provided at inference time through prompts, retrieval, or memory systems.

"Incorrect memories" in LLM stacks arise when these sources conflict, become outdated, or are retrieved improperly. Most retrieval systems fail because they fragment context, lose temporal relationships, and treat each query as a blank slate.

Existing LLMs usually remain static after deployment, which makes it hard to inject new knowledge into the model. When the world changes but the model does not, parametric knowledge drifts out of sync with reality. This is especially problematic for agents that must track evolving user preferences, updated facts, or shifting business data.

The ability to accurately recall user details, respect temporal sequences, and update knowledge over time is not a "feature" but rather "a prerequisite for Agentic AI," as Supermemory's research notes.

LONGMEMEVAL presents a significant challenge to existing long-term memory systems, with commercial chat assistants and long-context LLMs showing a 30% accuracy drop on memorizing information across sustained interactions. This benchmark, which tests five core memory abilities including temporal reasoning and knowledge updates, reveals just how brittle current approaches are.

What are the common failure modes in LLM memory?

Understanding why LLM memory fails is the first step toward correction. Three primary mechanisms account for most production errors:

Parametric bias. Models may not use new contextual knowledge if the wrong parametric answer also appears in context. Research across six question-answering datasets and five models shows this parametric bias phenomenon negatively affects a model's reading ability. The model essentially trusts its training data over the fresh evidence you provide.

Imperfect retrieval. Retrieval-augmented generation (RAG), while effective in integrating external knowledge to enhance LLMs, can be undermined by imperfect retrieval, which may introduce irrelevant, misleading, or even malicious information. When your retrieval pipeline surfaces the wrong documents, the model confidently generates answers grounded in noise.

Hallucination from knowledge gaps. Hallucination in generative AI refers to the generation of content that is fabricated, misleading, or not based on factual data. When memory systems fail to surface relevant context, models fill gaps with plausible-sounding but incorrect information.

These failure modes compound in multi-session scenarios. An agent that misremembers a user's address from six months ago and combines it with outdated product information can generate responses that are confidently wrong on multiple dimensions.

Key takeaway: Most memory errors stem from conflicts between parametric and contextual knowledge, retrieval noise, or gaps that trigger hallucination.

How to detect incorrect or stale memories?

Detection is where correction begins. Teams need systematic approaches to surface faulty memories before they reach users.

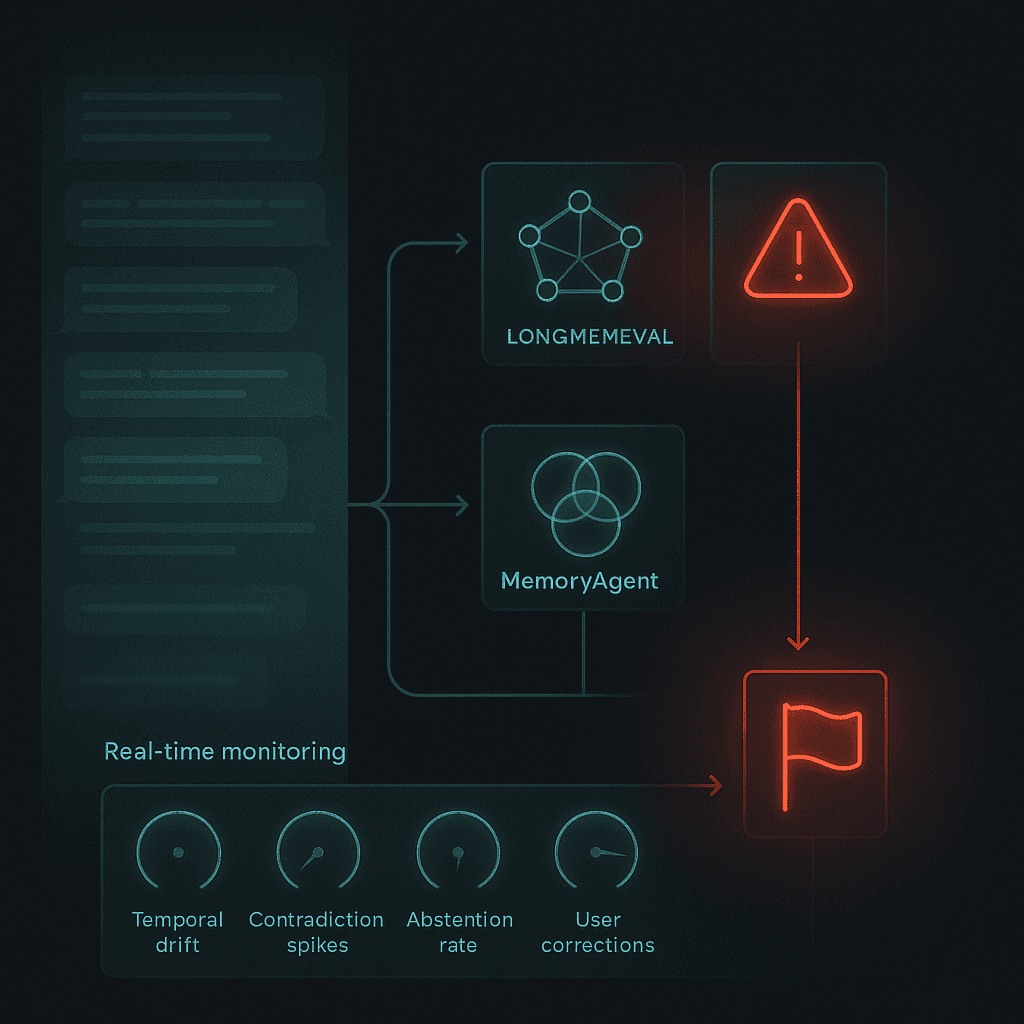

Benchmark-driven evaluation

LONGMEMEVAL remains the gold standard for evaluating long-term memory in chat assistants. It tests five core abilities:

Information extraction

Multi-session reasoning

Temporal reasoning

Knowledge updates

Abstention (knowing when not to answer)

The benchmark consists of 500 manually created questions embedded in chat histories averaging 115,000+ tokens. Long-context LLMs show a 30% to 60% performance drop on LONGMEMEVAL, making it an effective stress test.

Multi-competency assessment

MemoryAgentBench operationalizes memory quality along four axes:

Competency | What It Tests |

|---|---|

Accurate Retrieval (AR) | Extracting the correct snippet in response to a query |

Test-Time Learning (TTL) | Incorporating new behaviors during deployment without retraining |

Long-Range Understanding (LRU) | Integrating information across 100k+ token contexts |

Conflict Resolution (CR) | Revising or removing stored information when faced with contradictory evidence |

Critically, all paradigms exhibit dramatic failures on multi-hop conflict resolution: best accuracy remains at or below 6% for CR-MH, even in the most advanced models. This reveals where production systems are most vulnerable.

Practical detection signals

Beyond benchmarks, teams should monitor:

Temporal drift indicators: Queries about past states returning current values

Contradiction frequency: How often the agent contradicts prior statements

Abstention rates: Whether the system knows when it lacks sufficient information

User correction patterns: How often users correct agent responses

Which strategies actually correct incorrect LLM memories?

Once you detect faulty memories, you need concrete technical approaches to fix them. Five strategies have proven effective in production.

Graph-based belief updating (RecallM, EVOKG)

RecallM is four times more effective than using a vector database for updating knowledge previously stored in long-term memory. The architecture uses a graph database instead of a vector database, enabling it to capture and update complex relations between concepts through a lightweight neuro-symbolic approach.

RecallM's context revision step is necessary to update the beliefs of the system and implicitly "forget" information that is no longer relevant. The knowledge update process builds a persistent knowledge graph that can be used for many other applications.

For evolving knowledge, EVOKG is a noise-tolerant KG evolution method that constructs and updates the graph from unstructured documents. It resolves factual contradictions and models the temporal progression of non-static facts. The framework significantly enhances LLM reasoning, with gains of up to 23.3% in temporal reasoning tasks.

Dual-path retrieval (RF-Mem)

Not all queries require the same retrieval depth. RF-Mem (Recollection-Familiarity Memory Retrieval) introduces a familiarity uncertainty-guided dual-path approach:

Familiarity path: Fast, shallow retrieval for well-known queries

Recollection path: Slower, contextual reconstruction for uncertain or complex queries

RF-Mem measures the familiarity signal through mean score and entropy, then adaptively switches between paths. This preserves efficiency when familiarity is high while engaging structured recollection under unfamiliar conditions, embedding chain-like reasoning directly into the retriever.

Which benchmarks and metrics matter in 2026?

The benchmark landscape has matured significantly. Here's what teams should track:

LONGMEMEVAL

LONGMEMEVAL tests five core long-term memory abilities across 500 questions with ~115k tokens per problem. It remains essential because it evaluates the hardest production scenarios: multi-session reasoning, temporal accuracy, and knowledge updates.

Cortex achieved 90.23% overall accuracy on LongMemEval-s, the highest reported score to date, with particularly strong performance in temporal reasoning (90.97%), knowledge updates (94.87%), and user preference understanding.

MemoryAgentBench

MemoryAgentBench adds critical axes that LONGMEMEVAL misses, including test-time learning and conflict resolution. The finding that even advanced models score ≤6% on multi-hop conflict tasks highlights where the field needs to focus.

LoCoMo

Zep reports being 17% more accurate and 60% faster than Mem0 on the LoCoMo benchmark, which tests conversational memory retrieval. This benchmark is particularly useful for evaluating single-shot retrieval performance.

Key metrics to track

Metric | Why It Matters |

|---|---|

Temporal reasoning accuracy | Measures ability to handle "before/after" queries |

Knowledge update success rate | Tests whether new information overwrites old |

Multi-session recall | Evaluates memory persistence across conversations |

Conflict resolution accuracy | Critical for production reliability |

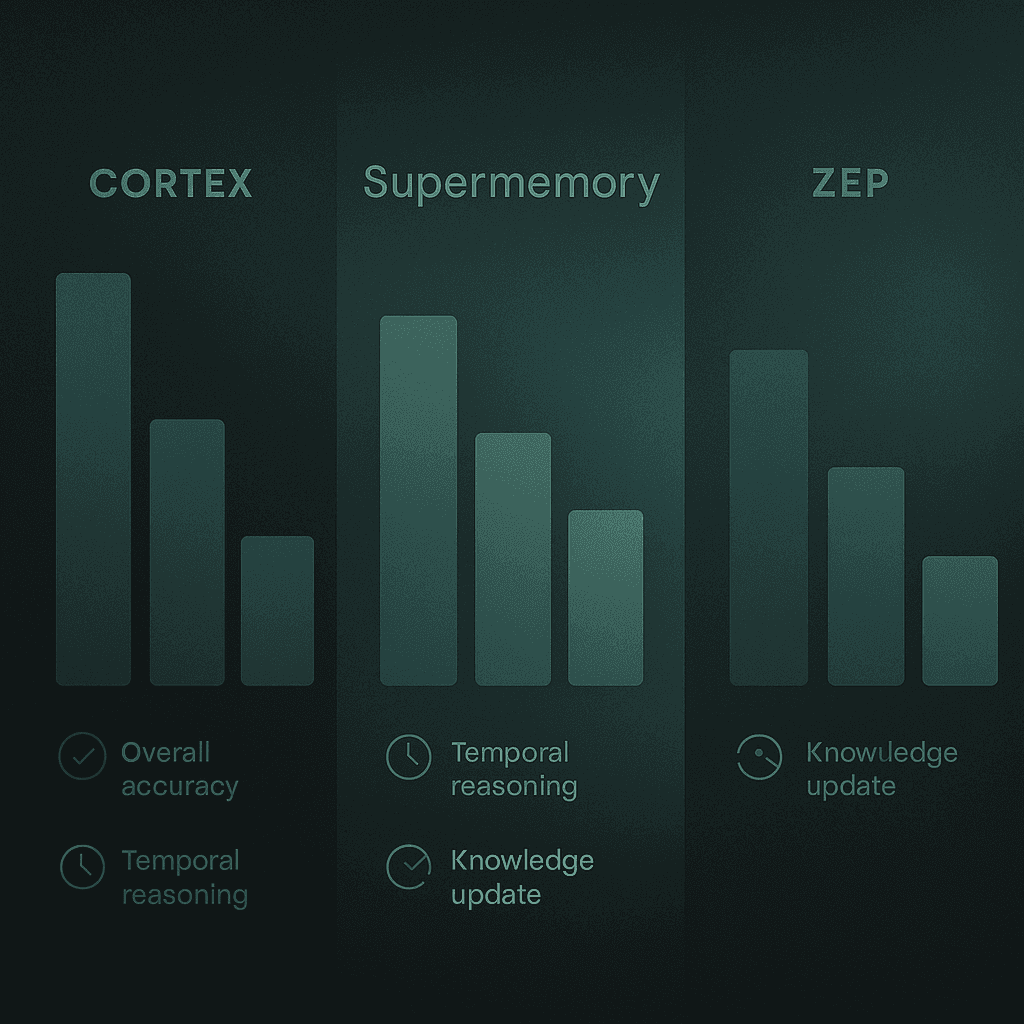

Cortex vs Zep vs Supermemory: which memory layer should you choose?

Choosing a memory layer requires understanding the tradeoffs between accuracy, latency, and architectural complexity.

Cortex

Cortex is a plug-and-play memory infrastructure that powers intelligent, context-aware retrieval. Key capabilities include:

Built-in long-term memory that evolves with every user interaction

Personalization hooks for user preferences, intent, and history

Dynamic retrieval and querying that always retrieves the most relevant context

Cortex achieved 90.23% overall accuracy on LongMemEval-s, outperforming alternatives in temporal reasoning (90.97% vs Zep's 62.4%) and knowledge updates (94.87% vs Zep's 83.3%). The platform treats memory as a first-class primitive, embedding it directly into the retrieval layer rather than bolting it on.

"Cortex doesn't just fetch documents. It learns, adapts, and gets smarter with time," as the documentation notes.

Zep

Zep uses a temporal knowledge graph architecture, delivering aggregate accuracy improvements of up to 18.5% over baseline, with response latency reduced by 90%. Its ability to maintain multiple temporal versions of facts and trace information lineage is valuable for audit-heavy use cases.

However, Zep's temporal reasoning accuracy (62.4% on LongMemEval-s) lags significantly behind Cortex's 90.97%, a critical gap for applications that depend on chronological accuracy.

Supermemory

Supermemory claims state-of-the-art results on LongMemEval-s with strength in multi-session (71.43%) and temporal reasoning (76.69%). The architecture uses chunk-based ingestion, relational versioning, and temporal grounding.

On LongMemEval-s, Supermemory achieved 85.2% overall accuracy compared to Cortex's 90.23%. The gap widens on temporal reasoning (81.95% vs 90.97%) and knowledge updates (89.74% vs 94.87%).

Comparison summary

Capability | Cortex | Supermemory | Zep |

|---|---|---|---|

Overall accuracy | 90.23% | 85.2% | 71.2% |

Temporal reasoning | 90.97% | 81.95% | 62.4% |

Knowledge updates | 94.87% | 89.74% | 83.3% |

Single-session (user facts) | 100% | 98.57% | 92.9% |

For teams prioritizing temporal accuracy and knowledge update reliability, the benchmark data points toward Cortex as the leading option.

How do you implement a self-correcting memory layer?

Implementing a self-correcting memory layer requires attention to architecture, personalization, and guard-rails.

Step 1: Choose the right retrieval architecture

Cortex uses an in-built reasoning engine that understands complex queries and automatically breaks them down into manageable, logical steps. This includes:

Sequential reasoning: Understanding when one piece of information depends on another

Parallel processing: Running multiple searches simultaneously for independent tasks

Context preservation: Maintaining relevant context across all steps

Dynamic adaptation: Adjusting the reasoning path based on intermediate results

Step 2: Enable self-improving personalization

Cortex includes memory that improves over time. The system learns how individual users behave, including what formats they prefer, what content types they favor, and how they usually ask questions.

For example, if you're building a knowledge assistant for a sales team and a rep prefers bullet-point answers and spreadsheet sources, the AI starts recognizing this and automatically responds accordingly.

Step 3: Implement memory for long-term personalization

Memory Bank is ideal for applications that require long-term personalization, LLM-driven knowledge extraction, and dynamic evolving context. Key features include:

Memory generation and extraction from conversations

Memory consolidation to prevent duplication

Automatic expiration of stale memories

Memory revisions when information changes

Step 4: Add guard-rails against memory poisoning

Memory poisoning occurs when false information is stored in the memory system. Mitigation strategies include:

Source verification before memory storage

Confidence scoring for new memories

Adversarial testing during development

User correction feedback loops

Implementation checklist

Select memory architecture (graph-based recommended for temporal accuracy)

Implement dual-path retrieval for efficiency

Enable self-improving personalization

Set up benchmark evaluation pipeline (LONGMEMEVAL, MemoryAgentBench)

Add monitoring for temporal drift and contradiction rates

Implement memory poisoning guard-rails

Key takeaways: building trustworthy, self-correcting AI memory

Correcting incorrect LLM memories requires a systematic approach across detection, correction, and continuous improvement:

Understand the failure modes: Parametric bias, imperfect retrieval, and hallucination from knowledge gaps account for most production errors.

Implement detection early: Use LONGMEMEVAL and MemoryAgentBench to surface memory failures before users encounter them.

Choose graph-based architectures: RecallM demonstrates 4x improvement over vector databases for knowledge updates. Cortex's temporal knowledge graph achieves 90.23% accuracy on LongMemEval-s.

Enable dual-path retrieval: RF-Mem's familiarity-uncertainty approach balances speed and accuracy.

Build for self-improvement: Systems that learn from successes and failures show durable gains.

Cortex memories update automatically through conversation, queries, and usage, giving your app the ability to learn, remember, and adapt. For teams building production AI agents where accuracy and personalization matter, a purpose-built memory layer like Cortex provides the foundation for trustworthy, self-correcting AI.

Frequently Asked Questions

Why do large language models misremember?

Large language models misremember due to conflicts between parametric and contextual knowledge, outdated information, and improper retrieval. These issues arise because LLMs rely on static parametric knowledge and dynamic contextual inputs, which can become misaligned over time.

What are the common failure modes in LLM memory?

Common failure modes in LLM memory include parametric bias, imperfect retrieval, and hallucination from knowledge gaps. These issues lead to incorrect or outdated information being used in responses, especially in multi-session scenarios.

How can incorrect or stale memories be detected in LLMs?

Incorrect or stale memories can be detected using benchmark-driven evaluations like LONGMEMEVAL, multi-competency assessments, and practical detection signals such as temporal drift indicators and contradiction frequency. These methods help identify memory failures before they impact users.

What strategies are effective for correcting incorrect LLM memories?

Effective strategies for correcting incorrect LLM memories include graph-based belief updating, dual-path retrieval, and implementing self-correcting memory layers. These approaches help update and maintain accurate knowledge over time.

How does Cortex address memory challenges in LLMs?

Cortex addresses memory challenges by providing a self-improving retrieval and memory layer that integrates seamlessly with AI models. It offers features like temporal reasoning, knowledge updates, and personalization, achieving high accuracy in benchmarks like LongMemEval-s.