How to Govern LLM Memory in Enterprise SaaS [2026 Guide]

Governing LLM memory in enterprise SaaS requires a three-layer framework combining policy, process, and platform capabilities. Leading solutions like Cortex achieve 90.23% accuracy on LongMemEval benchmarks, while enforcing access controls and temporal versioning to prevent data leaks and ensure compliance with regulations like GDPR, which can impose fines up to €20 million or 4% of global turnover.

Key Facts

• Enterprise AI agents require governed memory systems that handle temporal reasoning, knowledge updates, and multi-session continuity across 115k+ token conversations

• Ungoverned memory creates compliance risks, with regulators already issuing penalties such as €15 million (OpenAI) and $90 million (Two Sigma) for AI-related failures

• Commercial LLMs show a 30% accuracy drop when memorizing information across sustained interactions without proper memory governance

• Cortex leads memory benchmarks with 90.23% overall accuracy, outperforming alternatives like Supermemory (85.2%) and Zep (71.2%) on LongMemEval tests

• Effective governance requires policy definition, human oversight processes, and platform automation working together

• Real implementations like Workleap's SOC 2 transformation report 5 minutes saved per service per Scorecard rule and 1 day saved per workflow usage, per quarter

Agentic AI has moved from research demos to production workloads. By 2028, Gartner projects that 33% of enterprise software will include agentic AI, up from less than 1% in 2024. These agents do not simply answer questions; they execute complex, multistep workflows across enterprise systems. That shift makes LLM memory governance table stakes for security, compliance, and product reliability.

This guide explains why memory governance matters, what risks arise without it, which technical challenges complicate long-term recall, and how to build a governance framework that scales. It also compares leading memory platforms on public benchmarks and walks through a real-world deployment.

Why Does LLM Memory Governance Matter in 2026?

Modern AI is evolving from chatbots that generate content to agents that independently interact in a dynamic world. Those agents remember user preferences, track project state, and surface context from dozens of enterprise systems. Without governance, that memory becomes a liability rather than an asset.

"Data is your generative AI differentiator, and a successful generative AI implementation depends on a robust data strategy incorporating a comprehensive data governance approach."

AI governance, as IBM defines it, is "the ability to monitor and manage AI activities within an organization. It includes processes and procedures to trace and document the origin of data and models deployed within the enterprise; as well as the techniques used to train, validate, and monitor the continuing accuracy of models" (IBM).

LongMemEval, the benchmark introduced at ICLR 2025, tests exactly these memory capabilities across approximately 115k tokens per problem. Enterprises that ignore memory governance risk agents that hallucinate, leak data, or violate regulations.

Key takeaway: LLM memory governance is not optional overhead; it is the foundation that keeps agentic AI accurate, compliant, and trustworthy.

What Business & Compliance Risks Arise from Ungoverned Memory?

Ungoverned memory exposes enterprises to legal, financial, privacy, and reputational harm. The risks are not theoretical.

Data Exfiltration and Access Control Failures

Researchers have demonstrated data exfiltration attacks on AI assistants where adversaries exploit RAG architectures to leak sensitive information by leveraging the lack of access control enforcement. Fine-tuned models that learn from internal repositories can inadvertently surface confidential data to unauthorized users.

Regulatory Penalties

Regulators are watching. Italy's data protection agency fined OpenAI €15 million after finding that ChatGPT processed personal data without an adequate legal basis and violated transparency obligations. Under GDPR, any company found to have broken the rules faces fines of up to €20 million or 4% of global turnover.
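To make that ceiling concrete, here is a minimal worked sketch. It assumes the most serious infringement tier, where the cap is whichever of the two figures is higher (GDPR Article 83(5)). The function name and turnover figures are hypothetical and chosen only for illustration.

```python
def gdpr_fine_ceiling_eur(global_annual_turnover_eur: float) -> float:
    """Upper bound for the most serious GDPR infringements:
    the higher of EUR 20 million or 4% of worldwide annual turnover."""
    four_percent = global_annual_turnover_eur * 4 / 100
    return max(20_000_000.0, four_percent)


# Hypothetical turnover figures, for illustration only.
smaller_company = gdpr_fine_ceiling_eur(100_000_000)    # 4% is 4M, so the 20M figure applies
larger_company = gdpr_fine_ceiling_eur(2_000_000_000)   # 4% is 80M, which exceeds 20M
```

For smaller companies the fixed €20 million figure dominates; above €500 million in turnover, the percentage does.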

| Enforcement Action | Penalty | Root Cause |
|---|---|---|
| OpenAI / Italy DPA | €15 million | Inadequate legal basis for data processing |
| Two Sigma / SEC | $90 million (civil penalties) | Failure to address model vulnerabilities |
| Delphia & Global Predictions / SEC | $400,000 | False AI claims |

The SEC has taken action against investment advisers for AI-related failures. Two Sigma paid $90 million in civil penalties after failing to address known model vulnerabilities for years. Delphia and Global Predictions paid $400,000 in total civil penalties for misrepresenting their use of AI.

Reputational Damage

The OWASP Top 10 for LLM Applications Cybersecurity and Governance Checklist warns that hasty or insecure AI implementations can undermine corporate success. Trust erodes quickly when customers discover their data was mishandled or that an agent hallucinated critical information.

Key takeaway: Ungoverned memory is not just technical debt; it is a compliance and reputational time bomb.

Which Technical Challenges Complicate Long-Term Memory Governance?

Even well-intentioned teams struggle with the technical realities of long-term memory. Three challenges dominate: long-term forgetting, temporal reasoning, and multi-session continuity.

Long-Term Forgetting

LongMemEval shows that commercial chat assistants and long-context LLMs exhibit a 30% accuracy drop when memorizing information across sustained interactions. Agents forget what users told them, leading to repeated questions and inconsistent behavior.

Temporal Reasoning

Memory is not static. Users change jobs, update preferences, and revise facts. Benchmarks like MemoryAgentBench identify four core competencies essential for memory agents: accurate retrieval, test-time learning, long-range understanding, and selective forgetting. Most systems struggle with the temporal dimension, returning outdated information when users ask about past states.

Multi-Session Continuity

Enterprise workflows span days or weeks. Research confirms that memory (encompassing how agents memorize, update, and retrieve long-term information) is under-evaluated due to the lack of benchmarks. Without explicit design for multi-session continuity, agents treat each conversation as a blank slate.

Security & Access Control

Security compounds every challenge above. RAG pipelines and fine-tuned models must enforce access control at every stage of processing, ensuring that any content incorporated into a model's output is authorized for all intended recipients.
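The "authorized for all intended recipients" rule can be sketched as a filter that runs after retrieval and before prompt assembly. This is a minimal illustration, not any vendor's API: the `Chunk` type, the `allowed_principals` field, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_principals: frozenset  # users or groups cleared to read this chunk


def authorized_for_all(chunk: Chunk, recipients: set) -> bool:
    """A chunk is usable only if every recipient is cleared to read it."""
    if not recipients:
        return False  # fail closed when the audience is unknown
    return recipients <= chunk.allowed_principals


def filter_context(chunks: list, recipients: set) -> list:
    """Drop any retrieved chunk that at least one recipient may not see."""
    return [c for c in chunks if authorized_for_all(c, recipients)]


# Hypothetical retrieval results for a reply addressed to alice and bob.
retrieved = [
    Chunk("Q3 roadmap summary", frozenset({"alice", "bob", "carol"})),
    Chunk("Salary review notes", frozenset({"alice"})),
]
safe = filter_context(retrieved, {"alice", "bob"})
```

Checking the full recipient set, rather than only the requesting user, matters when an agent drafts content that will be forwarded or sent to a group.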

A cross-prompt injection attack (XPIA) occurs when an adversary embeds hidden instructions into benign-looking content (such as emails) which are subsequently processed by an LLM during reply generation (Microsoft Research). Existing defense mechanisms remain inadequate for preventing the leakage of sensitive fine-tuning data, particularly in enterprise settings where data often encapsulates complex, structured information.

Key takeaway: Long-term memory requires purpose-built architectures that handle forgetting, temporal updates, multi-session continuity, and access control simultaneously.

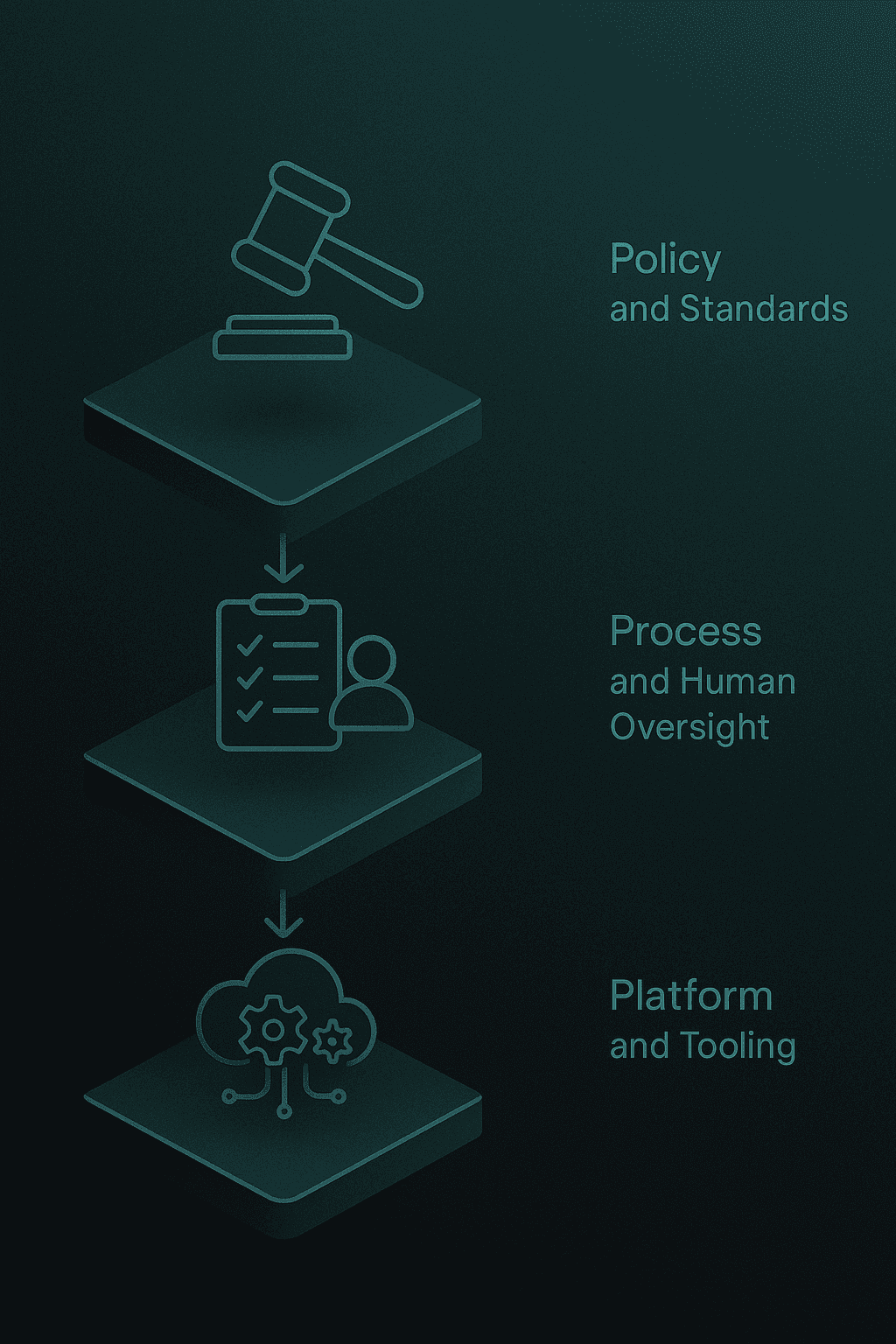

How Can Enterprises Apply a 3-Layer Governance Framework?

Effective governance spans three layers: policy, process, and platform. Each layer addresses different failure modes.

1. Policy & Standards

Policies define what the organization allows. Start with retention windows, audit trails, and privacy controls.
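One way to keep such policies enforceable is to express them as data that code can check. The sketch below assumes a 90-day retention window and two redacted fields purely for illustration; `MemoryPolicy`, `is_expired`, and `apply_privacy` are hypothetical names, not part of any product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class MemoryPolicy:
    retention: timedelta   # how long a memory may be kept
    audit_reads: bool      # whether every read must be logged
    redact_fields: tuple   # field names stripped before storage


def is_expired(created_at: datetime, policy: MemoryPolicy, now: datetime) -> bool:
    """True when a memory has outlived the retention window."""
    return now - created_at > policy.retention


def apply_privacy(record: dict, policy: MemoryPolicy) -> dict:
    """Return a copy of the record without the redacted fields."""
    return {k: v for k, v in record.items() if k not in policy.redact_fields}


# Illustrative values only.
policy = MemoryPolicy(
    retention=timedelta(days=90),
    audit_reads=True,
    redact_fields=("email", "phone"),
)
now = datetime(2026, 1, 1, tzinfo=timezone.utc)
old_memory = datetime(2025, 9, 1, tzinfo=timezone.utc)      # 122 days old
recent_memory = datetime(2025, 12, 1, tzinfo=timezone.utc)  # 31 days old
```

A scheduled job could call `is_expired` over stored memories and delete or archive the ones that exceed the window.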

Model monitoring tracks model output to ensure that models are explainable, fair, and compliant with regulations, and that they remain so once deployed (IBM).

Data governance is a critical building block, with two emerging areas of focus: managing unstructured data and enforcing access control within generative AI user workflows.

Document these policies in a central location and make them discoverable to every team building AI features.

2. Process & Human Oversight

Policies mean nothing without enforcement. Embed review loops, monitoring, and escalation paths into your development lifecycle.

Agent performance should be verified at each step of the workflow. Catching errors early prevents downstream harm.
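Step-level verification can be sketched as a runner that pairs each action with a check and halts at the first failure, so the problem can be escalated instead of propagating. All names here (`run_workflow`, `StepVerificationError`, the two sample steps) are hypothetical.

```python
class StepVerificationError(Exception):
    """Raised when a step's output fails its check."""


def run_workflow(steps, initial_state):
    """Run (name, action, verify) steps in order, stopping at the first failed check."""
    state = initial_state
    completed = []
    for name, action, verify in steps:
        state = action(state)
        if not verify(state):
            raise StepVerificationError(
                f"step '{name}' failed verification; completed so far: {completed}"
            )
        completed.append(name)
    return state, completed


# Toy workflow: parse an amount, then apply a fixed discount.
steps = [
    (
        "extract_amount",
        lambda s: {**s, "amount": float(s["raw"].strip("$"))},
        lambda s: s["amount"] >= 0,
    ),
    (
        "apply_discount",
        lambda s: {**s, "amount": s["amount"] - 150.0},
        lambda s: s["amount"] >= 0,  # a negative total signals an upstream error
    ),
]

final_state, done = run_workflow(steps, {"raw": "$200"})
```

With an input of `"$100"` the second check fails and the runner raises, which is the point at which a human reviewer or escalation path would take over.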

Humans remain essential, but their roles and numbers will change. Oversight shifts from manual review to exception handling and audit.

Create dashboards that surface memory drift, access anomalies, and compliance violations in real time.

3. Platform & Tooling

The right platform automates what policies and processes define. A governed memory layer must provide:

Self-improving retrieval that learns from user interactions and relevance signals

Native memory and personalization embedded directly in the retrieval layer

Temporal versioning that preserves knowledge evolution without overwriting old facts

Metadata-first filtering that scopes results before retrieval

Full observability with audit logs and tracing
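Two of these capabilities, metadata-first filtering and audit logging, can be illustrated together. The sketch below scopes candidates by metadata before any similarity scoring and records each query in an in-memory log. The record layout, the `search` function, and the toy two-dimensional vectors are assumptions for illustration, not a description of how any specific platform works.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(records, query_vec, filters, top_k, audit_log):
    # 1. Scope by metadata first, so out-of-scope records are never scored.
    scoped = [
        r for r in records
        if all(r["meta"].get(key) == value for key, value in filters.items())
    ]
    # 2. Rank only the scoped candidates.
    ranked = sorted(scoped, key=lambda r: cosine(r["vec"], query_vec), reverse=True)[:top_k]
    # 3. Record what was asked and what was returned.
    audit_log.append({"filters": dict(filters), "returned_ids": [r["id"] for r in ranked]})
    return ranked


# Hypothetical records from two tenants.
records = [
    {"id": "a1", "vec": [1.0, 0.0], "meta": {"tenant": "acme", "source": "jira"}},
    {"id": "a2", "vec": [0.6, 0.8], "meta": {"tenant": "acme", "source": "slack"}},
    {"id": "b1", "vec": [1.0, 0.0], "meta": {"tenant": "globex", "source": "jira"}},
]
audit_log = []
results = search(records, [1.0, 0.0], {"tenant": "acme"}, top_k=2, audit_log=audit_log)
```

Record `b1` matches the query vector exactly but is never scored, because it is removed by the tenant filter before ranking.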

RecallM's authors, for example, report that it is four times more effective than a vector database at updating knowledge previously stored in long-term memory. Graph-based architectures capture and update complex relations between concepts in a computationally efficient way.
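Temporal versioning, as opposed to overwriting, can be sketched as an append-only store in which each update closes the previous version and opens a new one. This is a simplified illustration rather than RecallM's or any vendor's design; the class and field names are hypothetical, and the sketch assumes updates arrive in chronological order.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class FactVersion:
    key: str
    value: str
    valid_from: datetime
    valid_to: Optional[datetime] = None  # None means "still current"


class VersionedMemory:
    def __init__(self):
        self._versions = []

    def set(self, key, value, at):
        """Close the current version of `key` (if any) and append a new one."""
        for version in self._versions:
            if version.key == key and version.valid_to is None:
                version.valid_to = at
        self._versions.append(FactVersion(key, value, at))

    def get(self, key, as_of=None):
        """Return the current value, or the value that held at `as_of`."""
        for version in self._versions:
            if version.key != key:
                continue
            if as_of is None:
                if version.valid_to is None:
                    return version.value
            elif version.valid_from <= as_of and (
                version.valid_to is None or as_of < version.valid_to
            ):
                return version.value
        return None

    def history(self, key):
        """All stored values for `key`, oldest first."""
        return [v.value for v in self._versions if v.key == key]


memory = VersionedMemory()
memory.set("employer", "Acme", datetime(2024, 1, 1))
memory.set("employer", "Globex", datetime(2025, 6, 1))
```

Because the older version is closed rather than deleted, the store can answer both "where does this user work now?" and "where did they work in late 2024?".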

Cortex embeds these capabilities into a single platform. The system learns how individual users behave (what formats they prefer, what content types they favor) and tailors responses accordingly. Parallel execution constraints around rate limits and cost are addressed using standard techniques such as model load balancing.

Key takeaway: Governance requires policy, process, and platform working together. No single layer is sufficient.

How to Evaluate Memory Solutions: Benchmarks & Metrics

Not all benchmarks test the same capabilities. Understanding what each measures helps you select the right platform.

Key Benchmarks

| Benchmark | Focus | Challenge |
|---|---|---|
| LongMemEval | Multi-session memory | ~115k tokens per problem; 30% accuracy drop for commercial LLMs |
| LoCoMo | Long-context retrieval | Single-shot retrieval accuracy |
| DMR (Deep Memory Retrieval) | Fact retrieval | Needle-in-a-haystack style tests |

LongMemEval is the gold standard for enterprise use cases. It tests information extraction, multi-session reasoning, temporal reasoning, knowledge updates, and abstention across 500 manually created questions.
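When running this kind of evaluation internally, it helps to report accuracy per ability as well as overall, since a strong average can hide a weak category. The sketch below assumes graded results are available as `(category, is_correct)` pairs. The function name and sample data are hypothetical and unrelated to any published scores.

```python
from collections import defaultdict


def accuracy_by_category(results):
    """Return ({category: accuracy}, overall_accuracy) from (category, is_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for category, is_correct in results:
        totals[category] += 1
        correct[category] += int(is_correct)
    per_category = {c: correct[c] / totals[c] for c in totals}
    overall = sum(correct.values()) / sum(totals.values()) if totals else 0.0
    return per_category, overall


# Made-up graded answers, for illustration only.
graded = [
    ("temporal reasoning", True),
    ("temporal reasoning", False),
    ("knowledge updates", True),
    ("abstention", True),
]
per_category, overall = accuracy_by_category(graded)
```

In this toy sample the overall figure is 0.75 while temporal reasoning sits at 0.5, which is the kind of gap a single headline number would obscure.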

"Zep is 17% more accurate and 60% faster" on the LoCoMo benchmark (Zep).

Supermemory reports state-of-the-art results on LongMemEval_s and targets the challenges of temporal reasoning and knowledge conflicts in high-noise environments.

Cortex vs. Leading Alternatives

Researchers benchmarked leading memory systems under the same datasets, metrics, and answer model.

| System | LongMemEval Overall | Temporal Reasoning | Knowledge Updates |
|---|---|---|---|
| Cortex AI | 90.23% | 90.97% | 94.87% |
| Supermemory | 85.2% | 81.95% | 89.74% |
| Zep | 71.2% | 62.4% | 83.3% |
| Full Context | 60.2% | 45.1% | 78.2% |

Cortex achieved 90.23% overall accuracy on LongMemEval_s, the highest reported score to date. The platform shows particular strength in temporal reasoning and knowledge updates, the hardest production-critical areas.

Key takeaway: Benchmark selection matters. LongMemEval tests the memory capabilities that break production agents; Cortex leads on that benchmark.

Implementing a Governed Memory Layer with Cortex

Deploying a governed memory layer involves connecting data sources, defining access policies, and validating retrieval quality.

Step 1: Connect Enterprise Data Sources

Cortex connects directly to Gmail, Slack, Notion, Jira, databases, and files. For each source, the platform automatically applies source-aware parsing, segments content into context-preserving chunks, and enriches chunks with entity resolution and temporal markers.

Step 2: Define Access Control Policies

Map user roles to data access levels. Cortex enforces tenant isolation and strict access controls, ensuring that retrieved content is authorized for the requesting user.
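A role-to-access-level mapping with tenant isolation can be sketched as follows. This is an illustrative model only and does not represent Cortex's configuration format or API; the role names, levels, and `can_read` function are hypothetical.

```python
from enum import IntEnum


class Level(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2


# Hypothetical mapping of roles to the highest level each may read.
ROLE_CLEARANCE = {
    "contractor": Level.PUBLIC,
    "employee": Level.INTERNAL,
    "compliance_officer": Level.CONFIDENTIAL,
}


def can_read(user: dict, doc: dict) -> bool:
    """Tenant isolation first, then role clearance; unknown roles are denied."""
    if user["tenant"] != doc["tenant"]:
        return False
    clearance = ROLE_CLEARANCE.get(user["role"])
    if clearance is None:
        return False
    return clearance >= doc["level"]


alice = {"tenant": "acme", "role": "employee"}
internal_doc = {"tenant": "acme", "level": Level.INTERNAL}
confidential_doc = {"tenant": "acme", "level": Level.CONFIDENTIAL}
other_tenant_doc = {"tenant": "globex", "level": Level.PUBLIC}
```

Checking the tenant before the role means a document from another tenant is denied even when its level is public.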

Step 3: Validate Retrieval Quality

Run internal benchmarks against your own data. Measure accuracy, latency, and relevance before going live.
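A pre-launch check of this kind can be as simple as a labeled set of queries, a hit-rate metric, and latency timing. The harness below is a minimal sketch: `evaluate` accepts any callable that returns a ranked list of document IDs, and the stub retriever and test cases are made up for illustration.

```python
import statistics
import time


def evaluate(retrieve, cases, k=3):
    """Measure hit rate at k and latency for (query, expected_doc_id) cases."""
    if not cases:
        raise ValueError("at least one test case is required")
    hits = 0
    latencies = []
    for query, expected_id in cases:
        start = time.perf_counter()
        result_ids = retrieve(query)[:k]
        latencies.append(time.perf_counter() - start)
        hits += int(expected_id in result_ids)
    return {
        "hit_rate_at_k": hits / len(cases),
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
    }


def stub_retrieve(query):
    """Stand-in for a real retrieval call; returns ranked document IDs."""
    index = {
        "vacation policy": ["doc-7", "doc-2"],
        "expense limits": ["doc-4"],
    }
    return index.get(query, [])


cases = [("vacation policy", "doc-2"), ("expense limits", "doc-9")]
report = evaluate(stub_retrieve, cases, k=3)
```

Swapping `stub_retrieve` for a call to the production retrieval endpoint turns the same harness into a regression check that can run before each release.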

Case Study: Workleap's SOC 2 Transformation

Workleap, an AI-powered talent management platform, faced painful audit cycles with manual tracking and outdated documentation. After implementing Cortex:

"With Cortex, SOC 2 went from being a painful, high-effort process to something we could easily demo in real time to our auditors." (Sven Diebold, Manager of Platform Engineering, Workleap)

Results included:

5 minutes saved per service per Scorecard rule

1 day saved per workflow usage, per quarter

Real-time visibility for auditors in a single screen

Cortex provided a centralized solution ensuring Workleap was always ready for auditors.

Key takeaway: A governed memory layer pays dividends beyond AI accuracy: it streamlines compliance and reduces audit burden.

Best Practices & Common Pitfalls

Do

Enforce access control at every stage. RAG pipelines must ensure that retrieved content is authorized for all recipients.

Verify agent performance at each workflow step. Early error detection prevents cascading failures.

Build reusable agents. Achieving business value with agentic AI requires changing workflows, not just deploying new tools.

Govern unstructured data as rigorously as structured data. Unstructured data is typically not managed with the same rigor as structured data.

Avoid

Treating memory as an afterthought. Bolting on memory after launch leads to fragile architectures.

Ignoring temporal updates. Users change; your memory system must track those changes.

Skipping human oversight. Humans remain essential for exception handling and audit.

Using rule-based automation for complex, variable tasks. Reserve AI agents for workflows that require judgment.

The OWASP checklist is intended for teams striving to stay ahead in the fast-moving AI world, aiming not just to leverage AI for corporate success but also to protect against the risks of hasty implementations.

Key Takeaways

LLM memory governance is the difference between AI that scales and AI that fails. Enterprises need:

Clear policies defining retention, audit, and privacy controls

Embedded processes with human oversight and real-time monitoring

Purpose-built platforms that handle temporal reasoning, access control, and multi-session continuity

Cortex provides a centralized solution ensuring organizations are always ready for auditors while delivering self-improving personalization that makes every interaction feel tailored instead of generic.

For teams building production-grade AI agents, Cortex offers the highest benchmark performance, native memory and personalization, and enterprise-ready security. Start by auditing your current memory architecture against the LongMemEval categories, then evaluate whether your platform can match Cortex's 90.23% accuracy on the hardest production-critical tasks.

Frequently Asked Questions

What is LLM memory governance and why is it important?

LLM memory governance involves managing and monitoring AI memory to ensure compliance, security, and reliability. It is crucial for preventing data leaks, avoiding regulatory penalties, and maintaining trust in AI systems.

What are the risks of ungoverned LLM memory?

Ungoverned LLM memory can lead to data exfiltration, regulatory penalties, and reputational damage. Without proper governance, AI systems may leak sensitive information or violate compliance standards.

How does Cortex improve LLM memory governance?

Cortex offers a self-improving retrieval and memory platform that integrates memory governance, ensuring compliance and security. It provides features like temporal versioning, access control, and personalized memory management.

What are the technical challenges in LLM memory governance?

Key challenges include long-term forgetting, temporal reasoning, and multi-session continuity. These require architectures that handle memory updates, enforce access control, and maintain session continuity.

How does Cortex compare to other memory solutions?

Cortex leads on benchmarks like LongMemEval with 90.23% accuracy, outperforming competitors in temporal reasoning and knowledge updates, making it a top choice for enterprise AI memory governance.