5 Easy Ways to Increase LLM Memory for Beginners

For beginners, increasing LLM memory starts with the key-value (KV) cache, which can consume tens of gigabytes for long contexts. Five practical approaches (KV cache quantization, semantic caching, attention optimization, model pruning, and prompt compression) can reclaim significant VRAM without new hardware. KVQuant, for example, keeps perplexity degradation below 0.1 at 3-bit precision, enabling LLaMA-7B to serve contexts of up to 1 million tokens on a single A100-80GB GPU.

TLDR

Memory bottleneck: KV cache activations surface as the dominant contributor to memory consumption during inference, especially for large context windows

Quantization impact: KV cache quantization can achieve 68-78% total memory reduction with minimal accuracy degradation

Compression rates: AQUA-KV delivers near-lossless inference at 2-2.5 bits per value with under 1% relative error in perplexity

Practical deployment: Methods like KVQuant enable serving LLaMA-7B with up to 1 million tokens on a single A100-80GB GPU

Calibration efficiency: AQUA-KV can be calibrated on a single GPU within 1-6 hours, even for 70B models

RAM and VRAM have become the hardest constraints when engineers try to increase LLM memory. Recent demos showcasing 1-million-token contexts prove the potential, yet most teams still hit walls long before that mark. The good news: you do not need exotic hardware or a PhD in systems research. Five beginner-friendly fixes can reclaim gigabytes and unlock longer contexts on the GPUs you already own.

Why Memory Is the New Bottleneck for LLMs

Memory, not compute, now limits what you can ship. Over the past 20 years, peak server FLOPS has scaled by 3.0x every two years, while DRAM and interconnect bandwidth have grown by only 1.6x and 1.4x every two years, respectively. That widening gap makes memory the primary bottleneck, especially during inference.
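To make the gap concrete, the short calculation below compounds the per-two-year rates quoted above. It is illustrative only: it assumes each rate held constant for all 20 years (10 two-year periods), which real hardware generations only approximate.

```python
# Illustrative compounding of the per-two-year growth rates quoted above,
# assuming each rate held constant for the full 20 years (10 periods).
PERIODS = 20 // 2

GROWTH_PER_TWO_YEARS = {
    "peak server FLOPS": 3.0,
    "DRAM bandwidth": 1.6,
    "interconnect bandwidth": 1.4,
}


def cumulative_growth(rate: float, periods: int = PERIODS) -> float:
    """Total growth factor after compounding `rate` once per period."""
    return rate ** periods


totals = {name: cumulative_growth(rate) for name, rate in GROWTH_PER_TWO_YEARS.items()}
for name, total in totals.items():
    print(f"{name:>24}: {total:>10,.0f}x")

# How many times faster compute grew than DRAM bandwidth over the same window.
gap = totals["peak server FLOPS"] / totals["DRAM bandwidth"]
print(f"{'FLOPS / DRAM gap':>24}: {gap:>10,.0f}x")
```

Under that assumption, compute grows roughly 59,000x while DRAM bandwidth grows roughly 110x, so each byte fetched from memory has to feed hundreds of times more arithmetic than it did two decades earlier.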

The key-value (KV) cache is the usual culprit. LLMs are increasingly used for applications that require large context windows, and at those context lengths KV cache activations become the dominant contributor to memory consumption during inference. A single Llama-405B user at 64K context consumes 15.75 GB of KV cache; a 32-user batch swells that to over 500 GB.
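The KV cache size follows directly from the model shape: two vectors (key and value) per token, per layer, per KV head. The sketch below assumes a Llama 3.1 405B attention shape of 126 layers, 8 KV heads (grouped-query attention), and a head dimension of 128. With those assumptions, the 15.75 GB figure above is reproduced exactly if "GB" means GiB and each cached element takes 1 byte (for example, an 8-bit format); at 16-bit precision the footprint doubles.

```python
GIB = 2**30


def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int = 1, bytes_per_elem: int = 2) -> int:
    """Bytes needed to hold keys and values for every token, layer, and KV head."""
    # Factor of 2: one key vector and one value vector per (token, layer, KV head).
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


# Assumed attention shape for Llama 3.1 405B: 126 layers, 8 KV heads (GQA), head_dim 128.
LLAMA_405B = dict(num_layers=126, num_kv_heads=8, head_dim=128)
CONTEXT = 64 * 1024

one_user_8bit = kv_cache_bytes(**LLAMA_405B, seq_len=CONTEXT, bytes_per_elem=1) / GIB
batch_8bit = kv_cache_bytes(**LLAMA_405B, seq_len=CONTEXT, batch_size=32, bytes_per_elem=1) / GIB
one_user_16bit = kv_cache_bytes(**LLAMA_405B, seq_len=CONTEXT, bytes_per_elem=2) / GIB

print(f"1 user, 8-bit KV:   {one_user_8bit:.2f} GiB")
print(f"32 users, 8-bit KV: {batch_8bit:.2f} GiB")
print(f"1 user, 16-bit KV:  {one_user_16bit:.2f} GiB")
```

Because the formula is linear in `seq_len` and `batch_size`, doubling either one doubles the cache, which is why long contexts and large batches hit the memory wall together.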

For edge deployment, the math is even tighter. Smaller devices cannot simply scale out. Understanding where memory goes is step one; the next sections show how to claw it back.

Key takeaway: Memory bandwidth saturation, not compute, is the real ceiling. Address the KV cache first.

1. Shrink the KV Cache with Quantization

How do you fit a million-token context on one GPU? Compress the key-value vectors that eat most of your VRAM.

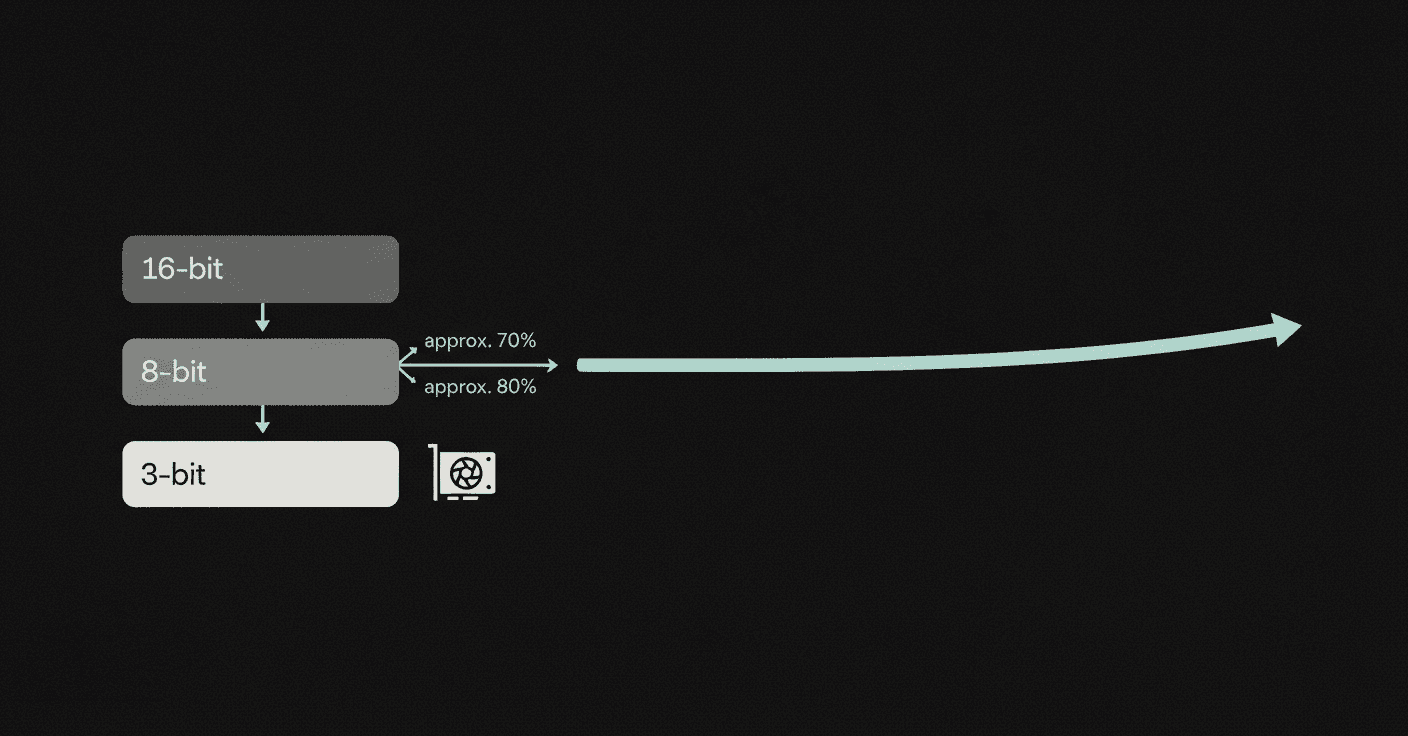

KV cache quantization encodes those vectors in 2-4 bits instead of 16, slashing memory while preserving accuracy. KVQuant, for example, achieves less than 0.1 perplexity degradation at 3-bit precision on Wikitext-2 and C4. Custom CUDA kernels deliver up to 1.7x speedups compared to baseline fp16 matrix-vector multiplications.
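A minimal sketch of the core idea, using plain asymmetric uniform quantization with NumPy. This is not KVQuant's algorithm, which layers further refinements (such as non-uniform datatypes and outlier handling) on top; it only shows how a (tokens, channels) key or value tensor becomes small integer codes plus per-slice scales. Keys get one scale per channel because outliers tend to cluster in specific channels; values get one scale per token. The tensor sizes and the simulated outlier channels are assumptions for illustration.

```python
import numpy as np


def quantize(x: np.ndarray, bits: int, axis: int):
    """Asymmetric uniform quantization with one (scale, min) pair per slice.

    Statistics are reduced along `axis`: axis=0 on a (tokens, channels) array
    gives one scale per channel; axis=1 gives one scale per token.
    """
    levels = 2**bits - 1
    x_min = x.min(axis=axis, keepdims=True)
    x_max = x.max(axis=axis, keepdims=True)
    scale = (x_max - x_min) / levels
    scale = np.where(scale == 0, 1.0, scale)  # constant slices: avoid divide-by-zero
    codes = np.clip(np.round((x - x_min) / scale), 0, levels).astype(np.uint8)
    return codes, scale, x_min


def dequantize(codes: np.ndarray, scale: np.ndarray, x_min: np.ndarray) -> np.ndarray:
    return codes.astype(np.float32) * scale + x_min


rng = np.random.default_rng(0)
tokens, channels = 1024, 128
keys = rng.standard_normal((tokens, channels)).astype(np.float32)
keys[:, :4] *= 10.0  # a few outlier channels, which motivates per-channel key scales
values = rng.standard_normal((tokens, channels)).astype(np.float32)

# Keys: per-channel scales. Values: per-token scales.
k_codes, k_scale, k_min = quantize(keys, bits=3, axis=0)
v_codes, v_scale, v_min = quantize(values, bits=3, axis=1)

keys_hat = dequantize(k_codes, k_scale, k_min)
values_hat = dequantize(v_codes, v_scale, v_min)
print("max key error:  ", np.abs(keys_hat - keys).max())
print("max value error:", np.abs(values_hat - values).max())

# Codes are stored one per uint8 here for clarity; a real kernel packs them.
packed_ratio = (k_codes.size * 3) / (keys.size * 16)
print("packed 3-bit size vs fp16:", packed_ratio)
```

Each reconstructed element is off by at most half a quantization step of its own slice, so isolating outliers into their own scale keeps the step small for every other channel.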

The KV Pareto framework maps Pareto-optimal configurations that achieve 68-78% total memory reduction with minimal (1-3%) accuracy degradation on long-context tasks. That reduction alone can turn an out-of-memory error into a working demo.

KV caches can occupy tens of gigabytes, storing vector representations for each token and layer. Cutting that footprint by three-quarters changes what fits on a single card.
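Whether a given bit width lands near a three-quarters saving depends on the metadata stored alongside the codes. The back-of-envelope below assumes one FP16 scale and one FP16 zero-point per group of `group_size` values; the bit widths and group sizes are illustrative choices, not the configurations behind the 68-78% figure above, which also depends on which parts of the cache are quantized.

```python
def effective_bits(bits: int, group_size: int, metadata_bits: int = 32) -> float:
    """Average bits per cached element, counting per-group metadata.

    metadata_bits=32 assumes one FP16 scale plus one FP16 zero-point per group.
    """
    return bits + metadata_bits / group_size


def memory_reduction(bits: int, group_size: int, baseline_bits: int = 16) -> float:
    """Fraction of FP16 KV cache memory saved."""
    return 1 - effective_bits(bits, group_size) / baseline_bits


for bits, group in [(4, 128), (3, 128), (2, 64)]:
    print(f"{bits}-bit, group {group}: {effective_bits(bits, group):.3f} bits/elem, "
          f"{memory_reduction(bits, group):.1%} smaller than FP16")
```

Smaller groups track local value ranges more closely but spend more bits on metadata, so the group size is itself a memory/accuracy trade-off.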

Choosing a Quantizer (KVQuant, AQUA-KV, PM-KVQ)

Not all quantizers are equal. The table below compares three open-source options:

| Quantizer | Bits | Calibration Time | Best For |
|---|---|---|---|
| KVQuant | 3-4 | Hours on 1 GPU | General LLMs, million-token contexts |
| AQUA-KV | 2-2.5 | 1-6 hours on 1 GPU | Llama 3.2, near-lossless inference |
| PM-KVQ | 4/2 mixed | Short calibration | Long Chain-of-Thought, reasoning tasks |

AQUA-KV leverages dependencies between keys and values across layers to reach near-lossless inference, with under 1% relative error in perplexity and LongBench scores. PM-KVQ targets reasoning workloads, improving benchmark performance by up to 8% over baselines while delivering 2.73-5.18x the throughput of 16-bit models. A related method, TRIM-KV, outperforms token-eviction baselines, especially in low-memory regimes.

Pick the quantizer that matches your model family and task profile. For most beginners, KVQuant offers the smoothest on-ramp; AQUA-KV suits teams already on Llama 3.2.

2. Use Semantic Caching to Skip Re-Computation

Why re-run the model when you have already answered the same question? Semantic caching stores embeddings and responses so similar queries return instantly.

Unlike exact-match caching, semantic caching matches semantically equivalent queries regardless of phrasing. Production deployments report cache hit rates between 61.6% and 68.8%, cutting API calls by up to 68.8%. Response times drop from 2.7 seconds to 0.3 seconds, an 89% reduction.

Because cached responses skip the model entirely, semantic caching reduces both latency and memory pressure. Fewer concurrent inference requests mean fewer KV caches in flight.

Quick-Start with Redis LLMCache & LangCache

Redis offers two paths: LLMCache for self-managed setups and LangCache as a fully-managed service.

Install Redis with vector support (RediSearch).

Configure a similarity threshold (production deployments use 0.7-0.95).

On each query, embed the prompt and search cached embeddings.

On a hit, return the stored response. On a miss, call the LLM and store the result.
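The four steps above can be sketched in memory with NumPy, with a Python list standing in for the Redis vector index. This does not use the Redis or RedisVL API. `toy_embed` is a hypothetical stand-in for a real embedding model: it hashes words into a bag-of-words vector, so it only matches prompts that share words, not true paraphrases. The 0.8 cosine-similarity threshold is an assumption chosen from the 0.7-0.95 range above, and `fake_llm` is a hypothetical model call.

```python
import hashlib
import re

import numpy as np

DIM = 256


def toy_embed(text: str) -> np.ndarray:
    """Hypothetical stand-in for a real embedding model: hashed bag of words, unit length."""
    vec = np.zeros(DIM, dtype=np.float32)
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        # md5 instead of hash(): Python's built-in str hash changes between runs.
        vec[int(hashlib.md5(word.encode()).hexdigest(), 16) % DIM] += 1.0
    norm = np.linalg.norm(vec)
    return vec / norm if norm > 0 else vec


class SemanticCache:
    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold  # minimum cosine similarity that counts as a hit
        self.embeddings: list[np.ndarray] = []
        self.responses: list[str] = []

    def lookup(self, prompt: str):
        """Return the best cached response if it is similar enough, else None."""
        if not self.embeddings:
            return None
        sims = np.stack(self.embeddings) @ toy_embed(prompt)  # unit vectors: dot = cosine
        best = int(np.argmax(sims))
        return self.responses[best] if sims[best] >= self.threshold else None

    def store(self, prompt: str, response: str) -> None:
        self.embeddings.append(toy_embed(prompt))
        self.responses.append(response)


def answer(cache: SemanticCache, prompt: str, call_llm) -> str:
    """Search the cache; on a miss, call the model and cache the result."""
    cached = cache.lookup(prompt)
    if cached is not None:
        return cached
    response = call_llm(prompt)
    cache.store(prompt, response)
    return response


calls = []


def fake_llm(prompt: str) -> str:  # hypothetical model call that records invocations
    calls.append(prompt)
    return f"answer to: {prompt}"


cache = SemanticCache(threshold=0.8)
answer(cache, "What is the capital of France?", fake_llm)
answer(cache, "the capital of France is what", fake_llm)   # same words, new phrasing: hit
answer(cache, "How do I bake sourdough bread?", fake_llm)  # unrelated: miss
print("model calls:", len(calls))
```

Swapping `toy_embed` for a real embedding model and the list scan for a vector index changes the quality and scale of matching, but not the hit/miss control flow.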

LLMCache can significantly reduce the latency of LLM responses by storing frequently accessed outputs. LangCache adds advanced cache management, simpler deployments, and lower LLM costs out of the box.

For AI gateways, agents, RAG applications, and chatbots, semantic caching delivers immediate savings with minimal code changes.

3. Optimize Attention Layers & Memory Management

Attention is the heart of the Transformer, but it is also a major memory hog. Paging tricks cut wasted GPU memory, and parallel decoding tricks speed up long-sequence generation, all without touching model weights.

PagedAttention, inspired by operating-system virtual memory, divides the KV cache into fixed-size blocks that can be allocated non-contiguously. vLLM, built on this algorithm, improves throughput by 2-4x compared to FasterTransformer and Orca, with near-zero waste in KV cache memory.
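The bookkeeping behind that idea can be sketched in a few lines of Python: a shared free list of physical blocks, and a per-sequence block table mapping logical blocks to physical ones. This is a toy illustration, not vLLM's block manager, which handles much more (such as sharing blocks between sequences). The block size of 16 tokens is an assumed value.

```python
from dataclasses import dataclass, field

BLOCK_SIZE = 16  # tokens per KV block (assumed value for illustration)


class BlockAllocator:
    """Hands out fixed-size physical KV cache blocks from a shared free list."""

    def __init__(self, num_blocks: int):
        self.free = list(range(num_blocks))

    def allocate(self) -> int:
        if not self.free:
            raise MemoryError("out of KV cache blocks")
        return self.free.pop()

    def release(self, block_ids) -> None:
        self.free.extend(block_ids)


@dataclass
class Sequence:
    # block_table[i] = physical block holding this sequence's logical block i.
    block_table: list = field(default_factory=list)
    num_tokens: int = 0

    def append_token(self, allocator: BlockAllocator) -> None:
        # Allocate a new block only when the last one is full, so at most
        # BLOCK_SIZE - 1 slots per sequence are ever unused.
        if self.num_tokens % BLOCK_SIZE == 0:
            self.block_table.append(allocator.allocate())
        self.num_tokens += 1

    def physical_slot(self, token_idx: int) -> tuple:
        """(physical block, offset) where this token's key and value vectors live."""
        return self.block_table[token_idx // BLOCK_SIZE], token_idx % BLOCK_SIZE

    def wasted_slots(self) -> int:
        return len(self.block_table) * BLOCK_SIZE - self.num_tokens


allocator = BlockAllocator(num_blocks=64)
a, b = Sequence(), Sequence()
for step in range(37):  # two requests decode concurrently, so their blocks interleave
    a.append_token(allocator)
    if step < 5:
        b.append_token(allocator)

print(a.block_table, b.block_table)  # physical blocks need not be contiguous
print(a.physical_slot(17), a.wasted_slots())

allocator.release(a.block_table + b.block_table)  # finished requests return their blocks
```

Because memory is reserved one block at a time instead of one maximum-length buffer per request, unused space is bounded by a partial block per sequence.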

Flash-Decoding tackles the decode phase. It splits keys and values into smaller chunks, computes attention in parallel, and reduces final outputs with log-sum-exp scaling. The result: up to 8x faster generation for very long sequences, with almost constant run-time as sequence length scales to 64K.
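The split-and-reduce math can be checked in NumPy for a single query vector (one decode step, one head). This sketch only reproduces the arithmetic; the speedup in Flash-Decoding comes from running the chunks in parallel on the GPU, which a Python loop does not do. Sequence length, head dimension, and chunk counts are arbitrary choices for the check.

```python
import numpy as np


def full_attention(q, K, V):
    """Reference: softmax(K q / sqrt(d)) V for a single query vector."""
    s = K @ q / np.sqrt(q.shape[-1])
    w = np.exp(s - s.max())
    return (w / w.sum()) @ V


def split_kv_attention(q, K, V, num_chunks):
    """Same result, computed chunk by chunk and merged with log-sum-exp.

    Assumes num_chunks <= number of keys so no chunk is empty.
    """
    d = q.shape[-1]
    outs, lses = [], []
    # Phase 1: chunks are independent (a GPU kernel would run them in parallel).
    for K_c, V_c in zip(np.array_split(K, num_chunks), np.array_split(V, num_chunks)):
        s = K_c @ q / np.sqrt(d)
        m = s.max()
        w = np.exp(s - m)
        outs.append((w / w.sum()) @ V_c)  # chunk-local attention output
        lses.append(m + np.log(w.sum()))  # log-sum-exp of this chunk's scores
    # Phase 2: reweight each chunk by its share of the global softmax mass.
    lses = np.array(lses)
    global_lse = lses.max() + np.log(np.exp(lses - lses.max()).sum())
    weights = np.exp(lses - global_lse)
    return (weights[:, None] * np.stack(outs)).sum(axis=0)


rng = np.random.default_rng(0)
seq_len, d = 4096, 64
q = rng.standard_normal(d)
K = rng.standard_normal((seq_len, d))
V = rng.standard_normal((seq_len, d))
print(np.allclose(full_attention(q, K, V), split_kv_attention(q, K, V, num_chunks=8)))
```

The rescaling works because each chunk's log-sum-exp records how much softmax mass it holds, so the partial outputs can be combined exactly without revisiting the keys.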

vAttention goes further by keeping the KV cache contiguous in virtual memory while paging physical memory. This approach improves decode throughput by up to 1.99x over vLLM and end-to-end serving throughput by up to 1.29x.

PagedAttention vs. vAttention: Which One Wins for Beginners?

| Feature | PagedAttention | vAttention |
|---|---|---|
| Kernel rewrite required | Yes | No |
| Virtual memory layout | Non-contiguous | Contiguous |
| Throughput gain | 2-4x over FasterTransformer and Orca | Up to 1.99x decode, 1.29x end-to-end over vLLM |
| Best for | Mature vLLM deployments | Drop-in upgrades, any attention kernel |

PagedAttention can sustain 32K-token contexts at high speed, and benchmarks show latency growing only linearly with sequence length from 128 to 2048 tokens. vAttention requires no changes to existing attention kernels, making it easier for beginners to adopt.

If you already run vLLM, PagedAttention is baked in. If you want to keep existing kernels, vAttention offers a simpler migration.

4. Prune, Distill & Deploy Smaller Models

Sometimes the fastest path to more memory is a smaller model. Pruning and distillation let you shrink parameters without starting from scratch.

Pruning an existing LLM and then re-training it with a fraction of the original training data can be a suitable alternative to repeated, full retraining. Deriving 8B and 4B models from a 15B checkpoint requires up to 40x fewer training tokens per model, slashing compute costs by 1.8x for the full model family.
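A simplified sketch of structured width pruning on one MLP block, scoring each hidden neuron by its mean absolute activation on a small calibration batch and keeping the top ones. This is loosely in the spirit of activation-based importance, not the actual Minitron recipe, which covers more dimensions than MLP neurons and is followed by distillation. The ReLU MLP, layer sizes, and random calibration data are assumptions for illustration.

```python
import numpy as np


def mlp_forward(x, W_in, W_out):
    """x: (batch, d_model) -> ReLU hidden (batch, d_ff) -> (batch, d_model)."""
    hidden = np.maximum(x @ W_in, 0.0)  # ReLU chosen for simplicity
    return hidden @ W_out, hidden


def prune_mlp_neurons(W_in, W_out, calib_x, keep):
    """Structured width pruning: drop whole hidden neurons with low mean |activation|."""
    _, hidden = mlp_forward(calib_x, W_in, W_out)
    importance = np.abs(hidden).mean(axis=0)        # one score per hidden neuron
    kept = np.sort(np.argsort(importance)[-keep:])  # top-`keep` neurons, original order
    # Removing neuron j deletes column j of W_in and row j of W_out.
    return W_in[:, kept], W_out[kept, :], kept


rng = np.random.default_rng(0)
d_model, d_ff = 32, 128
W_in = rng.standard_normal((d_model, d_ff)) / np.sqrt(d_model)
W_out = rng.standard_normal((d_ff, d_model)) / np.sqrt(d_ff)
calib_x = rng.standard_normal((256, d_model))  # small calibration batch (random here)

W_in_small, W_out_small, kept = prune_mlp_neurons(W_in, W_out, calib_x, keep=64)
print(W_in_small.shape, W_out_small.shape)

full_out, _ = mlp_forward(calib_x, W_in, W_out)
small_out, _ = mlp_forward(calib_x, W_in_small, W_out_small)
rel_err = np.linalg.norm(full_out - small_out) / np.linalg.norm(full_out)
print(f"relative output change before any retraining: {rel_err:.2f}")
```

The pruned weights are dense and simply smaller, so they need no special sparse kernels; the short retraining step described above is what recovers the accuracy lost here.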

For edge deployment, INT8/INT4 quantization can reduce model size by 75%+ while achieving 4x faster inference with negligible accuracy loss. TinyLlama, a compact 1.1B model pretrained on around 1 trillion tokens for approximately 3 epochs, demonstrates remarkable performance on downstream tasks and outperforms similarly sized open-source models.

Case Study: Minitron vs. Training from Scratch

The Minitron models illustrate the savings:

Token savings: 40x fewer training tokens per derived model.

Parameter savings: 2-4x compression from the parent Nemotron-4 family.

MMLU improvement: Up to 16% higher MMLU scores compared to models trained from scratch.

Minitron 8B achieves accuracy comparable to Mistral 7B, Gemma 7B, and Llama-3 8B, showing that pruning plus distillation can deliver production-quality models at a fraction of the cost.
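The distillation half of that recipe retrains the pruned student to match the parent model's output distribution. Below is a generic logit-distillation loss in NumPy, assuming forward KL divergence on temperature-softened distributions; it is a common formulation, not a claim about Minitron's exact objective. The logits here are random stand-ins for real teacher and student outputs.

```python
import numpy as np


def log_softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    return z - np.log(np.exp(z).sum(axis=axis, keepdims=True))


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Mean forward KL(teacher || student) over positions, on softened distributions.

    Logits have shape (positions, vocab). Multiplying by temperature**2 is a common
    convention that keeps gradient scale roughly independent of the temperature.
    """
    log_p_teacher = log_softmax(teacher_logits / temperature)
    log_p_student = log_softmax(student_logits / temperature)
    kl = (np.exp(log_p_teacher) * (log_p_teacher - log_p_student)).sum(axis=-1)
    return float(kl.mean() * temperature**2)


rng = np.random.default_rng(0)
teacher = rng.standard_normal((8, 1000)) * 3        # stand-in for parent-model logits
student = teacher + rng.standard_normal((8, 1000))  # a pruned student that roughly tracks it

print(distillation_loss(teacher, teacher))  # identical distributions
print(distillation_loss(student, teacher))
```

Matching the teacher's full distribution gives the student a richer signal per token than one-hot labels, which is one reason a derived model can need far fewer training tokens.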

5. Compress Prompts Before They Hit the Model

Long prompts inflate the KV cache before the model generates a single token. Prompt compression shrinks input length while preserving semantic integrity.

LLMLingua introduces a coarse-to-fine compression method with a budget controller, token-level iterative compression, and instruction tuning for distribution alignment. Experiments show up to 20x compression with little performance loss on GSM8K, BBH, ShareGPT, and Arxiv-March23.
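A toy illustration of token-level compression under a length budget: keep the words that carry the most information and drop the predictable ones, preserving order. LLMLingua scores tokens with a small language model; this sketch substitutes a hypothetical unigram model fit on the prompt itself, and works on whitespace-separated words rather than real tokens. Both are assumptions for illustration only.

```python
import math
import re
from collections import Counter


def _norm(word: str) -> str:
    return re.sub(r"\W", "", word.lower())


def toy_compress(prompt: str, ratio: float) -> str:
    """Keep about len(words) / ratio words, preferring high self-information ones.

    Self-information -log p(word) comes from a unigram model fit on the prompt
    itself: a hypothetical stand-in for the small language model LLMLingua uses.
    Ties keep earlier words (Python's sort is stable, even with reverse=True).
    """
    words = prompt.split()
    if not words or ratio <= 1:
        return prompt
    counts = Counter(_norm(w) for w in words)
    total = len(words)
    info = [-math.log(counts[_norm(w)] / total) for w in words]
    budget = max(1, math.ceil(total / ratio))  # target length implied by the ratio
    ranked = sorted(range(total), key=lambda i: info[i], reverse=True)
    keep = sorted(ranked[:budget])  # restore original word order
    return " ".join(words[i] for i in keep)


prompt = ("The meeting is on Monday. The meeting room is the large room on the "
          "third floor. Bring the quarterly report and the budget spreadsheet.")
short = toy_compress(prompt, ratio=2.0)
print(short)
print(len(prompt.split()), "->", len(short.split()), "words")
```

Frequent filler words such as "the" are dropped first, while rarer content words survive, which is the intuition behind keeping semantic integrity at high compression ratios.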

LLMLingua-2 speeds things up further. The model is 3x-6x faster than existing prompt compression methods while accelerating end-to-end latency by 1.6x-2.9x at compression ratios of 2x-5x.

For teams handling chain-of-thought prompts or in-context learning examples that exceed tens of thousands of tokens, prompt compression is a quick win. Compress before the model sees the input, and you reduce both memory and latency.

Bonus: Retrieval vs. Long-Context Models—Which Saves More Memory?

Do you need a million-token context window, or can retrieval give you the same results with less VRAM?

An LLM with a 4K context window using simple retrieval augmentation can match the performance of a 16K-context model fine-tuned via positional interpolation on long-context tasks, while using much less computation. Retrieval-augmented Llama2-70B with a 32K window outperforms GPT-3.5-turbo-16k on long-context tasks and generates faster than its non-retrieval baseline.
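Retrieval keeps the prompt, and therefore the KV cache, small by sending the model only the most relevant chunks that fit a fixed budget. The toy sketch below ranks chunks by Jaccard word overlap with the query (a stand-in for an embedding retriever) and packs them greedily under a token budget, counting tokens by whitespace splitting (an assumption; real systems use the model's tokenizer). The chunks and the budget are made up for illustration.

```python
import re


def word_set(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set, b: set) -> float:
    return len(a & b) / len(a | b) if a | b else 0.0


def retrieve_within_budget(query: str, chunks: list, token_budget: int) -> list:
    """Rank chunks by word overlap with the query, then pack the best under the budget.

    Token counts use whitespace splitting, a rough stand-in for a real tokenizer.
    """
    q = word_set(query)
    ranked = sorted(chunks, key=lambda c: jaccard(q, word_set(c)), reverse=True)
    selected, used = [], 0
    for chunk in ranked:
        n = len(chunk.split())
        if used + n <= token_budget:  # skip chunks that would overflow the context
            selected.append(chunk)
            used += n
    return selected


chunks = [
    "Invoices are due thirty days after the delivery date.",
    "The cafeteria opens at eight and closes at three.",
    "Late invoices incur a two percent monthly fee.",
    "Parking permits are issued by the front desk.",
]
context = retrieve_within_budget(
    "When are invoices due and what is the late fee?", chunks, token_budget=20)
print(context)
```

However large the corpus grows, the prompt never exceeds `token_budget`, so the KV cache stays bounded; the trade-off is that answer quality now depends on the retriever ranking the right chunks first.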

Latent-space memory offers another path. Research shows systems can extend knowledge retention from under 20K to over 160K tokens with similar GPU memory overhead. Specialized parametric memory modules deliver 2.5x faster inference than RAG with superior accuracy, making them a promising hybrid approach.

The choice depends on your workload. Retrieval suits document-grounded tasks where context changes per query. Long-context models shine when you need holistic reasoning across a fixed corpus. Many production systems combine both: retrieval narrows the corpus, then a long-context model reasons over the results.

Key Takeaways: Build a Memory-Savvy LLM Stack

Memory limits are real, but they are not insurmountable. The five techniques above (KV cache quantization, semantic caching, attention optimizations, model pruning, and prompt compression) can each reclaim tens of percentage points of VRAM. Combined, they turn a single-GPU deployment into a viable path for million-token contexts.

For teams building production AI agents, memory efficiency is not optional. Server FLOPS continue to outpace bandwidth growth, so the gap will only widen. Investing in memory-aware infrastructure now pays dividends as models grow.

Cortex approaches this challenge by combining enterprise data, context-aware knowledge graphs, and built-in memory into a single retrieval and memory platform. If you are looking for a production-grade layer that handles ingestion, search, personalization, and long-term memory out of the box, the Cortex documentation is a good next step.

Frequently Asked Questions

What is the main bottleneck for LLMs today?

Memory, not compute, is the primary bottleneck for LLMs, especially during inference. The key-value (KV) cache is a significant contributor to memory consumption, making it crucial to address for longer context windows.

How does KV cache quantization help increase LLM memory?

KV cache quantization compresses key-value vectors into 2-4 bits instead of 16, significantly reducing memory usage while maintaining accuracy. This technique can achieve up to 78% total memory reduction with minimal accuracy degradation.

What is semantic caching and how does it benefit LLMs?

Semantic caching stores the embeddings of past queries alongside their responses, so a new query that is semantically equivalent to a cached one can reuse the stored response instead of triggering re-computation. This approach cuts API calls, reduces latency, and alleviates memory pressure by decreasing concurrent inference requests.

How can attention layers be optimized to save memory?

Optimizing attention layers involves techniques like PagedAttention and Flash-Decoding. PagedAttention divides the KV cache into blocks that can be allocated non-contiguously, which reduces wasted memory, while Flash-Decoding splits keys and values into chunks processed in parallel, which speeds up decoding for long sequences. Together, these methods can significantly improve throughput and reduce memory waste.

What role does Cortex play in enhancing LLM memory?

Cortex provides a memory-first context and retrieval platform that integrates enterprise data, context-aware knowledge graphs, and built-in memory. It offers a production-grade solution for handling ingestion, search, personalization, and long-term memory, addressing memory challenges in AI applications.